What Claude Dispatch is and how to activate it to use Cowork on your computer from your mobile

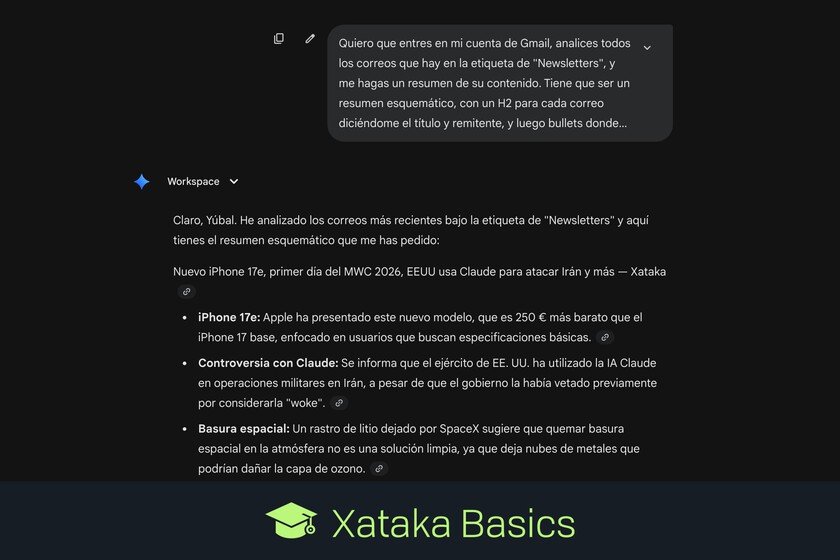

We are going to explain what Claude Dispatch is, a new option for Claude that allows you to control your computer from your mobile. It is related to Claude Cowork, and in fact it works like a remote control for operating this artificial intelligence from a distance.

We will start by explaining what Claude Dispatch is, so that you understand what you can do with it and the risks involved in using it. Then we will go through the few steps you need to take to activate it.

What is Claude Dispatch

Claude Cowork is a personal assistant that controls your computer, a feature of the paid version of this AI. It is something close to an artificial intelligence agent, which takes control of a folder on your computer or even your browser to perform the tasks you ask of it.

Claude Cowork is designed specifically to automate tasks with files and applications and to manage the operating system of your local computer. It is available in the Claude desktop app, and also for users of the Claude in Chrome extension.

Claude Dispatch is like a remote control for Cowork. Claude’s agent runs only on the computer, so on its own you cannot use it away from home. Dispatch, however, lets you ask it remotely to do things on your PC. In short, if you activate this function you can control Cowork on your computer from the Claude app on your mobile, and so get tasks done even when you are not in front of your computer.

However, keep in mind that using it can be dangerous if you are not careful. Cowork is trained to be careful and to ask permission at every step it takes, but you are always exposed to a malfunction that deletes files it shouldn’t or performs online actions you don’t want. And when you are not in front of your computer to supervise it, you have less chance of stopping something from happening if the tool gets out of control.

How to activate Claude Dispatch

To use Claude Dispatch you only need to activate the tool and have Claude on your mobile.
To activate it, open the Claude application on your computer, go to the Cowork section and click on the Dispatch option that appears inside it, in the left column.

On this screen you will see a list of things you can do with Dispatch activated. Press the Begin button to start the activation process.

First you will be asked to download Claude for your mobile, and then you will reach a screen where you can grant the permissions that Cowork needs to operate remotely. It will ask you to install the browser extension, give it access to your files and keep your computer awake while the app is running, so that it does not go to sleep and stop what it is doing. When everything is set, click on Finish Settings.

And that’s it. Once you have given it access to everything, you just have to open the Dispatch section in the Claude app on your mobile. This will take you to the Cowork section, where you can ask it to perform tasks on the computer.