What’s new and everything that changes in ChatGPT with the new version of its artificial intelligence model

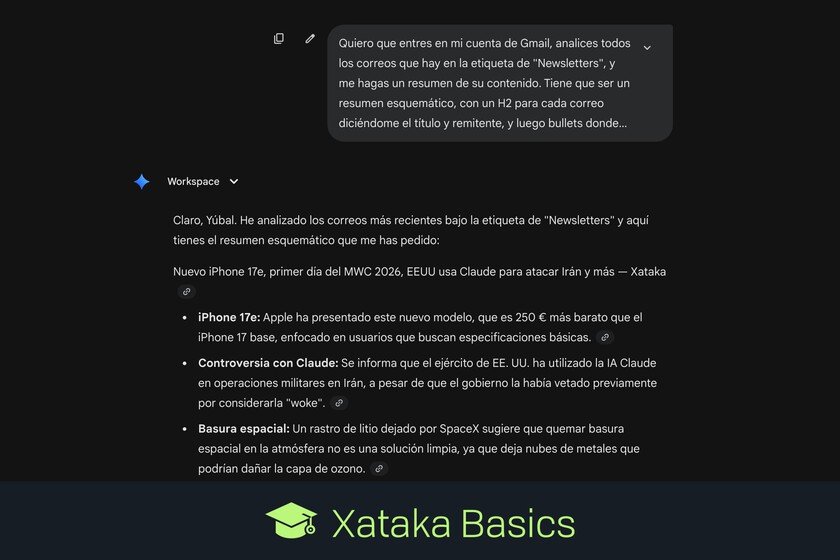

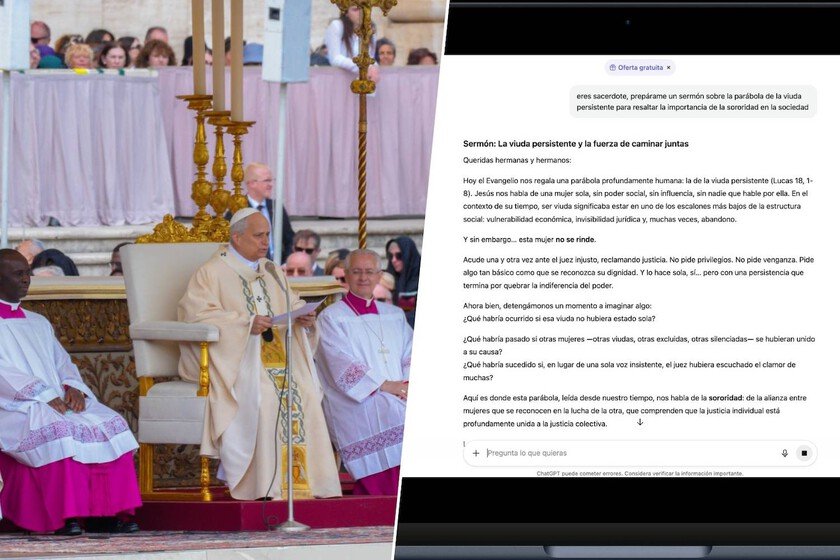

Let’s go over what’s new in GPT-5.3 Instant, the new version of ChatGPT’s artificial intelligence model. We are going to give you a list of the main changes in this version, so that you know what has improved and what is different from now on.

Since the version numbers can be confusing: yes, there was already a GPT-5.3 release in February. That was GPT-5.3 Codex, created to write programming code. On March 3, OpenAI launched the conversational variant, GPT-5.3 Instant, which is the one ChatGPT uses when it replies to you in text in a conversation.

## What’s new in GPT-5.3 Instant

Next, we are going to list the main new features that this version of the OpenAI artificial intelligence model brings, with a brief explanation of each one so that it is easier to understand.

- Improvements in tone and conversational style: OpenAI admits that GPT-5.2 could sound a bit overbearing or make unwarranted assumptions about the user’s intent or emotions. GPT-5.3 now offers a more focused and natural tone, with fewer proclamations and filler phrases, while maintaining the bot’s personality. The tone can still be customized from the settings.
- Fewer hallucinations in the answers: GPT-5.3 has reduced hallucinations by 22.5% to 26.8% when searching online, and by 9.6% to 19.7% when relying on its own knowledge base.
- Less censoring of responses: With GPT-5.2, ChatGPT was overly cautious and sometimes refused questions that could be answered safely. These unnecessary refusals are now reduced.
- Fewer moralizing warnings: GPT-5.3 tones down the overly defensive and moralizing preambles that used to come before the actual answer. In other words, it will lecture you less and focus more on your question, without explaining its safety limits.
- Better responses with online information: This new version balances the information it searches for on the Internet more effectively with its own knowledge base and reasoning. Instead of simply summarizing what it finds on the web, it first uses its own understanding to contextualize recent news. Because it leans less on the web, it also no longer generates such long lists of links.
- Better creative writing: It can produce more expressive, imaginative and immersive texts, and it switches more easily between practical tasks and expressive writing without losing clarity and coherence.
- There is still work to do: OpenAI admits that there are still improvements to be made, and that future versions will improve responses in languages other than English, as well as the tone of the responses.