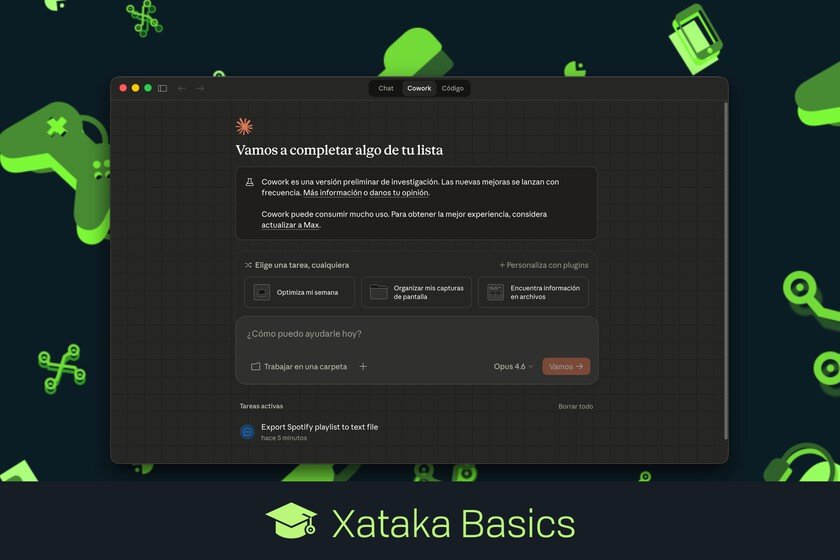

What Claude Cowork is, how it works, and what you can do with this AI assistant on your computer

We are going to explain what Claude Cowork is and how it works. It is one of the advanced tools of Claude's artificial intelligence: an automation assistant for your computer, a kind of AI agent that you can ask to do tasks on your PC without you having to touch anything.

We will start by explaining what it is so that you understand the concept. Then we will tell you how it works, and we will finish with some examples of the things you can do with it.

What is Claude Cowork

Claude Cowork is basically a personal assistant with artificial intelligence designed to work natively on your computer. This way, you can use Claude on your Windows PC or Mac to ask it to do things automatically.

It has been designed above all to help you with the repetitive tasks you do in your daily life with files, folders and applications. Imagine being able to ask the AI to rename the files in a folder, look for duplicates, or even give you summaries of the contents of those files.

It is similar to an AI agent, but not exactly the same thing. AI agents are capable of doing complex tasks for you, like booking a hotel. Claude Cowork, however, is designed specifically to automate tasks with files and applications and to manage the operating system of your local computer. So it doesn't have as many features, but it is better at what it is designed to do.

This tool is available in the Claude desktop app, although only for paying users. This means you always have it at hand on your computer. In addition, you can give it access to your browser so that it can do tasks there or interact with web content, but for that you need to install the Claude in Chrome extension.

How Claude Cowork works

The way Claude Cowork works is very simple. You open the Claude application and go to the Cowork tab, and there you tell it what you want it to do using natural language.

When making the request, you will have to specify what you want, the folder where you want it done, and any other relevant details. Think of it as asking a person to do the task. If you want to change the names of the files in a folder, you will have to say that you want to rename them, indicate which folder it is, and even give the format, in case you want it to be "Year-Month-Name" or any other.

Cowork has controlled access to your file system, so you can decide and customize which elements it can touch and which ones it can't. When you make a request, you can even choose the folder where you want it to act.

The tool will first process your text to understand what you want, and then chain several actions together to carry it out. Claude's own AI works out how to do it and, if an approach doesn't work, corrects course and tries another way.

In the Claude app, within the Cowork section, you will be able to see step by step what the assistant is doing. The AI will ask for your permission as it goes, for example before renaming files or connecting to a tool, and you can always see the progress and stop it whenever you want.

Lastly, you should know that you can use connectors and extensions to link web services and applications on your computer so that Cowork can do things in them. You can add your notes application, Spotify, or your messaging app, among many others, as well as web services such as Gmail, Google Drive, Notion, Trivago, WordPress, and many more.

What you can do with Cowork

The uses of this tool depend on many things, but there is a series of basic actions worth knowing that will save you a lot of time. They are the following:

- File management: Manage files in any folder by organizing downloads, renaming batches of files with specific patterns, moving documents between folders, finding and deleting duplicates, zipping and unzipping files, and more.
- Document processing: You can process various document types by extracting text from PDFs, converting files from one format to another, combining multiple documents into one, or extracting specific data from multiple files to create summaries.
- Automation of repetitive tasks: It can also help you automate tasks you do every day or every week, such as preparing reports by putting together data from different files, creating folder structures for new projects, or making organized backups of certain files.
- Cleaning and maintenance: You can also ask it to delete old files you no longer need, clean up temporary folders, organize your photo or music library, or find large files that are taking up space.

But these are just the basic features of Cowork. You can get it to do many more things by connecting it to cloud services and other applications, or by installing the Chrome extension.

To give an example, I asked it to create a text file with the list of all the songs (more than 600) that I have in a certain playlist on my Spotify account. Claude launched its Chrome extension and I could watch it go to my Spotify account. I gave it permission to log in, it then tried various ways of reading the songs in the list (first a script, and then scrolling with the mouse), and finally it created the plain text document.

In Xataka Basics | Claude: 23 functions and some tricks to get the most out of this artificial intelligence
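
To get a feel for what the basic file tasks described above involve, here is a minimal Python sketch of two of them: planning a batch rename in a "Year-Month-Name" format, and finding duplicate files. It is only an illustration and not how Cowork works internally. The function names `plan_renames` and `find_duplicates` are hypothetical, and taking the year and month from each file's modification date is an assumption.

```python
import hashlib
from collections import defaultdict
from datetime import datetime
from pathlib import Path


def plan_renames(folder: Path) -> dict[Path, Path]:
    """Map each file directly inside `folder` to a 'Year-Month-Name' target.

    Nothing is renamed here: the function only returns the plan, so it can
    be reviewed before any change is made. Using the modification date as
    the source of the year and month is an assumption of this sketch.
    """
    plan = {}
    for path in sorted(folder.iterdir()):
        if not path.is_file():
            continue  # skip subfolders
        modified = datetime.fromtimestamp(path.stat().st_mtime)
        plan[path] = path.with_name(f"{modified:%Y-%m}-{path.name}")
    return plan


def find_duplicates(folder: Path) -> list[list[Path]]:
    """Group files under `folder` (including subfolders) that share the
    same SHA-256 digest, which for practical purposes means the same content.
    """
    by_hash = defaultdict(list)
    for path in sorted(folder.rglob("*")):
        if path.is_file():
            # Reads each file fully into memory; fine for a small sketch.
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            by_hash[digest].append(path)
    # Keep only digests that appear more than once.
    return [group for group in by_hash.values() if len(group) > 1]
```

Both functions only report what they find and never modify or delete anything, much like being shown each step and asked for permission before a change is made. Applying a rename plan would be a separate, explicit step, for example calling `old.rename(new)` for each pair once you have reviewed it.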