can multiply the performance of the GeForce RTX 50 by six

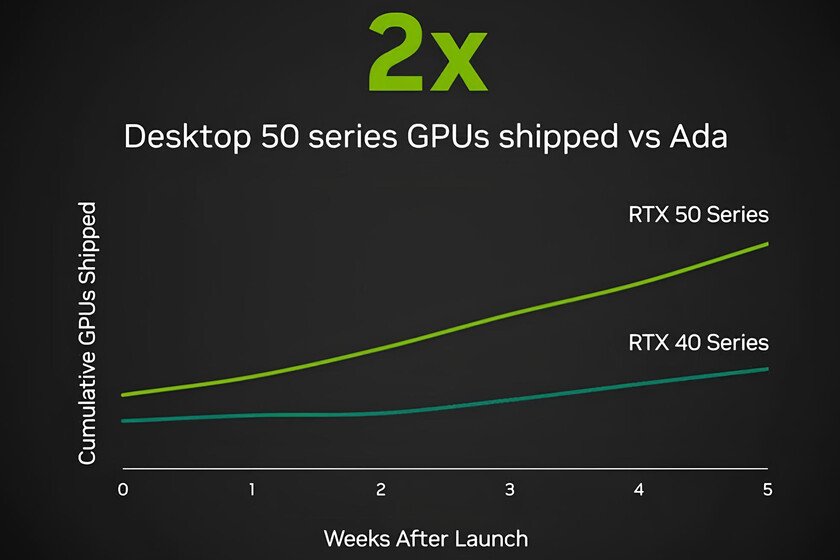

At CES 2026, NVIDIA set hardware aside, but not innovation. If one word sums up its bet for this year, it is AI. Whether or not you like AI-based image reconstruction techniques that rely on the latest GPUs, the reality is that they are here to stay, and in a big way. And if your thing is enjoying games at the best possible quality and at high resolution, even more so. The GeForce RTX 50 cards are the standard-bearers (but not the only ones) of NVIDIA's latest release, which has started rolling out now and will finish this spring: Deep Learning Super Sampling 4.5, or DLSS 4.5 for short, the successor to DLSS 4. Although the latest-generation cards benefit the most from the new technology, some of its improvements are also compatible with previous series.

With DLSS 4.5, NVIDIA promises 4K and 240 Hz gaming with ray tracing thanks to AI. NVIDIA also notes that more than 250 titles are already compatible with DLSS 4.0 and that the most ambitious games of 2026, such as ‘Resident Evil Requiem’ or ‘Pragmata’, will be compatible as well.

**The secrets of DLSS 4.5, explained**

DLSS arrived with the first generation of GeForce RTX GPUs with one objective: letting us enjoy video games at higher FPS even if we were demanding and, along the way, with ray tracing enabled. With DLSS 4.0, NVIDIA managed to relieve the graphics card of part of the rendering effort in order to raise FPS without taking a toll on quality. DLSS 4.5 goes one step further, promising games with full path tracing at 4K and a high refresh rate, in a move that is not a mere iteration but a deep overhaul of the underlying technology.

As we explained in our hands-on with DLSS 4.0, the combination of better graphics, fluidity and latency is a holy trinity that cannot be achieved the old way: if we want the best image quality, we have to sacrifice fluidity and latency.
If we want fluidity in abundance, the textures are not going to be as good as they could be. So AI comes into play to make it all possible, even if that means adding invented frames. They are not real, but the experience is so satisfying that it is worth it.

The three pillars on which DLSS 4.0 rests are transformer-based Super Resolution, multi frame generation and ray reconstruction. The ray tracing part (ray reconstruction) stays as it is in DLSS 4.0 in this latest installment, but the first two go up a level with DLSS 4.5. Let's see where they started and how far they go with NVIDIA's latest technology.

**Super Resolution.** The GPU renders the game at a low resolution (e.g. 1080p) so it can run very fast. In DLSS 4.0, the AI takes that blurry image and turns it into a crystal clear 4K image. With DLSS 4.5, NVIDIA says we will get cutting-edge image quality together with dynamic multi frame generation (up to six times more frames) for incredible fluidity. The demos we have been able to see show minimal ghosting, greater image stability and smoother edges, because it also improves anti-aliasing, the technique used to reduce the jagged edges of objects in each frame. In short: it goes straight at today's problems.

**The secret: second-generation transformers.** This enhanced Super Resolution is based on a second-generation transformer with improved training, a larger data set, five times the computing power, the ability to analyze many more problematic scenarios than its predecessor, and smarter pixel sampling. In practice, even when the scene is more complex, the reconstruction is much more precise. It is true that this second-generation transformer is a more complex and heavier model, but the efficiency of the FP8 format used by the newer series (RTX 40 and 50) softens the impact. In short: that extra intelligence barely costs NVIDIA's latest cards any speed.

**Multi frame generation.**
With DLSS 4.0, up to three artificial frames were created for every frame drawn by the GPU, making heavy games feel surprisingly fluid. With DLSS 4.5, multi frame generation becomes dynamic. Compatible cards can multiply frames by four to reach 190 fps, or deliver up to six frames for each rendered frame and up to 240 fps. In practice, the most interesting part is that it can maximize fps according to the monitor's refresh rate. A GPU that can run a game with full path tracing at 4K and a sustained, real 240 Hz is a milestone.

The graph we see below shows the performance of an RTX 5090 at 4K in several fairly recent games and three scenarios: native rendering, the new DLSS 4.5 in dynamic mode, and DLSS 4.5 at x6. As can be seen, this image reconstruction technology delivers higher performance in every title, with notable gains in games such as ‘NARAKA: BLADEPOINT’.

**Compatibility and availability.** As you would expect, given that this launch does not come with a new generation of GPUs (those are expected between 2027 and 2028), every one of these new features will be available on NVIDIA's latest graphics cards, the GeForce RTX 50. Below is a summary table with the main technologies in DLSS 4.5 and the GPU families compatible with each of them.

| | GeForce RTX 50 | GeForce RTX 40 | GeForce RTX 30 | GeForce RTX 20 |
|---|---|---|---|---|
| Multi frame generation x6 | Yes | No | No | No |
| Super Resolution | Yes | Yes | Yes | Yes |

As for when we can enjoy these improvements, the option to enable the new DLSS 4.5 Super Resolution is already live in the NVIDIA app in more than 400 games for compatible GPUs. Of course, for the dynamic x6 frame generation exclusive to the …
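
To make the frame generation arithmetic described above more concrete, here is a minimal Python sketch. It assumes that an xN mode shows N frames for each rendered frame (one rendered plus N-1 generated, in line with the x4 mode creating three artificial frames). The selection policy, the function names `displayed_fps` and `pick_multiplier`, and the example frame rates are hypothetical illustrations; the article does not describe how NVIDIA actually chooses the multiplier.

```python
def displayed_fps(rendered_fps: float, multiplier: int) -> float:
    """Frames shown per second when each rendered frame is accompanied
    by (multiplier - 1) generated frames."""
    return rendered_fps * multiplier


def pick_multiplier(rendered_fps: float, refresh_hz: float,
                    max_multiplier: int = 6) -> int:
    """Hypothetical policy: choose the largest multiplier whose output
    does not exceed the monitor's refresh rate (minimum of 1)."""
    best = 1
    for m in range(1, max_multiplier + 1):
        if displayed_fps(rendered_fps, m) <= refresh_hz:
            best = m
    return best


if __name__ == "__main__":
    # Illustrative numbers only, not measurements from the article.
    for base in (40, 80, 300):
        m = pick_multiplier(base, refresh_hz=240)
        print(f"{base} fps rendered -> x{m} -> {displayed_fps(base, m)} fps shown")
```

Under these assumptions, a game rendering 40 fps on a 240 Hz monitor would use x6 to fill the panel, while one already rendering 80 fps would only need x3, which is one way to read the claim that dynamic mode adapts to the monitor's refresh rate.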