Princeton hadn't proctored its exams for 133 years thanks to its "honor code." AI just broke that pact

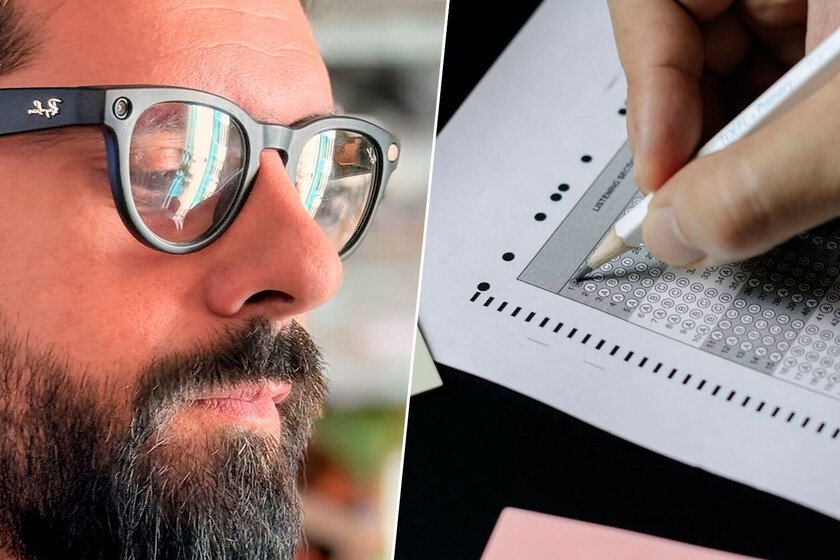

For more than a century, Princeton built academic trust on an honor code, a pledge its students signed promising not to cheat on exams. Professors even left the room. Nobody watched, because honor was guarantee enough. That model has just disappeared, and artificial intelligence is largely to blame.

What has happened. Princeton faculty voted earlier this week to proctor all in-person exams starting July 1. The measure scraps a policy dating back to 1893, when students themselves asked that exams no longer be supervised. With only one vote against, the decision was practically unanimous, and it marks the most significant change to the university's honor system in 133 years.

Why now. Generative AI has radically transformed students' ability to cheat without being caught. According to the proposal presented by Michael Gordin, dean of the faculty, tools such as ChatGPT allow students to cheat in ways that are almost impossible to spot with the naked eye, especially during an exam. Cheating once required some effort (finding a classmate willing to let you copy, sneaking a crib sheet into the exam, and so on); now there are countless ways to do it digitally.

Numbers. In a student newspaper survey of more than 500 seniors, almost 30% admitted to having cheated on an exam or assignment during their time at Princeton. 44.6% said they knew of classmates who had violated the code without reporting them, and only 0.4% had ever filed a report. The number of cases investigated by the Honor Committee reached 60 this year, and the committee's chair, Nadia Makuc, believes they are just the tip of the iceberg.

Nobody says anything. Princeton's honor system has historically relied on students reporting their own peers. That no longer works. According to the approved proposal, fear of being publicly called out on social media or on anonymous apps such as Fizz (the campus social network) discourages anyone from reporting.
In addition, AI-assisted cheating is far less visible to whoever is sitting next to you. No more crib notes, sideways glances, or anything of the sort.

What exactly changes. According to the university newspaper, professors will be present in the classroom during exams, but they will not actively intervene. Their role is to act as witnesses, referring any suspected violation they detect to the student Honor Committee. The honor pledge ("I pledge my honor that I have not violated the Honor Code during this examination") remains in place. The difference is that now someone will be watching.

Trust. Professors such as David Bell and Anthony Grafton, of Princeton's History Department, have acknowledged that the change alters their relationship of trust with students. Former dean of the faculty Jill Dolan told the student newspaper: "I think it's a shame, but it's necessary." AI has set off a spiral that is hard to break: the more students believe others are cheating, the more tempted they feel to do the same. Christian Moriarty, a professor of ethics and law at St. Petersburg College in Florida, told the Wall Street Journal that "what is at stake is not just the soul of education, but the genuine development of critical thinking."

Further supervision. Proctors are not Princeton's only measure for overseeing student work. Over the past year, the number of take-home exams has been cut by more than two-thirds. According to The Atlantic, the Economics department will also introduce oral defenses of term papers. Some professors have begun requiring that essays be written in Google Docs so they can review the editing history and verify that the text was written progressively.

Cover image | Roxana Crusemire and Ben Mullins