Elon Musk is trying to win the AI race by creating the Wikipedia of AI. We have many questions

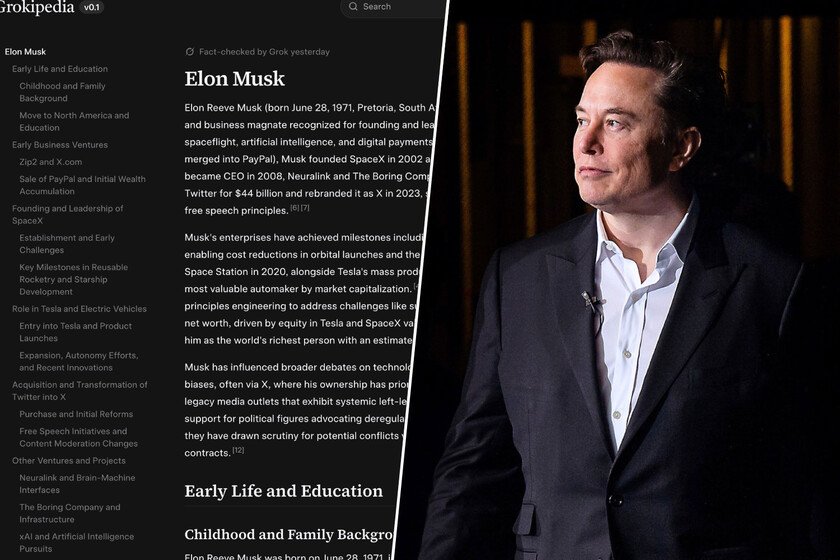

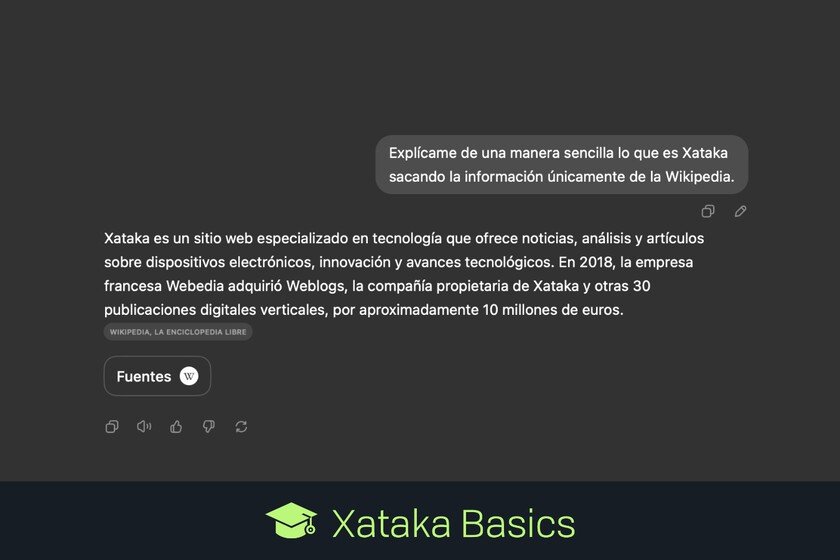

Grokipedia, the new online encyclopedia created by xAI, is now available. The project that Elon Musk has been talking about for some time is just what we expected: a version of Wikipedia in which the content has been generated by Grok, the AI model developed by Musk’s company. And that is precisely the problem.

What is Grokipedia. Basically, a copy of Wikipedia in which the texts are written by Grok. The design is simple, with a home page that is a search engine. The articles follow Wikipedia’s design and its structure of headings and photos. At the moment there do not seem to be any photos in those articles, and Grokipedia does not currently allow users to edit the pages either.

If AI makes mistakes, how can we trust AI? That is the essential question that determines whether the idea of Grokipedia is valid. Considering that AI makes things up and makes mistakes, what can you expect from an online encyclopedia created by an AI model?

Grokipedia on the left, Wikipedia on the right. The PS5 article is an absolute copy of the Wikipedia original.

Content “adapted” or directly copied from Wikipedia. Some Grokipedia pages display a message stating that the content has been adapted from Wikipedia under the Creative Commons Attribution-ShareAlike 4.0 license. This happens, for example, with the article on the MacBook Air. In other articles, such as the one on the PlayStation 5, that message falls short because the article is basically the same as Wikipedia’s.

An encyclopedia with biases. In Grokipedia there are signs that the theoretical neutrality and objectivity that should be fundamental pillars of such a project are faltering.
As reported by Wired, there are worrying examples, such as the entry on the slavery of African Americans in the US, which talks about “ideological justifications.” An entry about “gay porn” shows false information indicating that the proliferation of this content fueled the AIDS epidemic in the 1980s. In the entry on gender, Grokipedia states that “gender refers to the binary classification of humans as males or females based on biological sex.” Wikipedia’s entry begins by stating that “Gender is the range of social, psychological, cultural and behavioral aspects of being a man (or boy) or woman (or girl), or a third gender.”

In the image and likeness of Elon Musk. The article about Elon Musk contains 11,000 words and 300 citations/references, compared to the 8,000 words and 523 references of its Wikipedia version. Both encyclopedias have curiosities in that article: Wikipedia, for example, has a section dedicated to Musk’s controversial salute, which is not on Grokipedia. Conversely, Grokipedia does mention the “fart guy” controversy, which is not on Wikipedia.

This is just the beginning. This version “0.1” of Grokipedia contains 885,000 articles, while Wikipedia has more than 8 million entries. In 2017 Elon Musk posted a tweet praising the work of Wikipedia, but over time that perception changed, probably because of the comments included in the Wikipedia entry about him. This year he tweeted the message “Stop financially supporting Wikipedia until balance is restored!”

The danger. Although Elon Musk claims that Grokipedia is open source and that anyone can use it for free, it remains to be seen how much ability its users will have to edit the AI-created articles. The risk is that this project represents a new attempt to control the conversation and, as entrepreneur Gary Marcus says, “whoever writes the encyclopedia controls the narrative.”

Jimmy Wales warns.
The creator of Wikipedia, Jimmy Wales, said in an interview with The Washington Post a few days ago that he was curious to see what Grokipedia would end up being, but that he did not have high expectations for the result. For him, AI language models “are simply not good enough to write encyclopedia articles. There will be a lot of errors.” Lauren Dickinson, spokesperson for the Wikimedia Foundation, explained to The Verge that “Wikipedia knowledge is and always will be human.”

Problems for the free, human-created encyclopedia. Even so, Wikipedia is threatened by AI, not only because this legendary online encyclopedia has been the great manual for training AI, but also because it is suffering a traffic crisis. The xAI project is the latest attack on that source of knowledge and information: editing and writing that were once controlled and carried out entirely by human beings are now handed over to xAI’s AI model, Grok.

Image | dvids

In Xataka | There is a reason why Wikipedia resists as the last human bastion against AI: because its editors rebelled