AI's visual garbage is so omnipresent that it is already provoking a counter-aesthetic: neo-brutalism

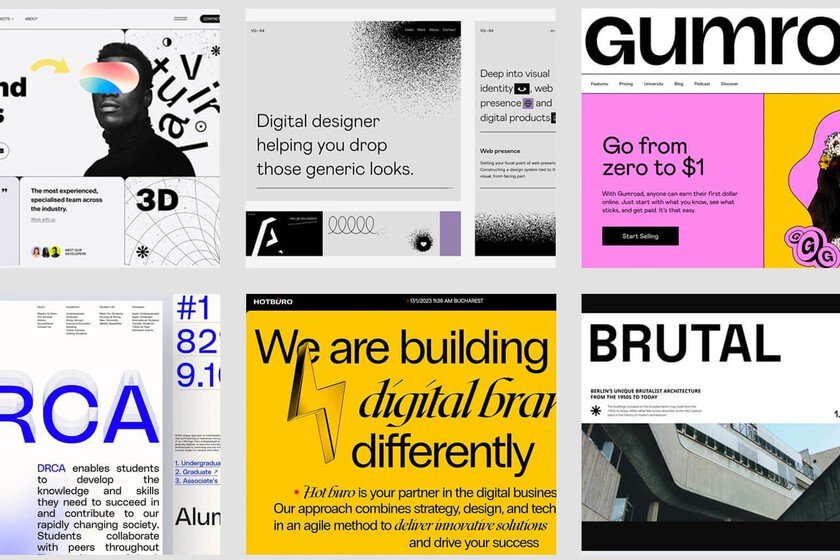

The Internet is being flooded with images and designs that seem cut from the same mold: identical fonts, predictable gradients, aesthetics polished to the point of nausea. The phenomenon is hard to describe and delimit because of its endless variants and its omnipresence, but it has a name: “AI slop”. The term refers to digital content generated with artificial intelligence, from images to web design itself, in which quantity takes precedence over any hint of originality or of meaning beyond the efficiency of the mass-production chain.

But what is AI slop? The expression gained traction in 2024 thanks to British programmer Simon Willison, although it had previously circulated in communities such as 4chan and Hacker News. The concept points to a root problem: when AI models are trained on the most common patterns on the internet, they replicate a generic, forgettable aesthetic ad nauseam. It is what experts call “distributional convergence”: everything seems designed by the same depersonalized algorithm.

And the anti-AI slop? Faced with this invasion of algorithmic uniformity, a visual counterculture is emerging that celebrates precisely what AI avoids: the clumsiness, the unevenness, the marks of the human creative process. Anti-AI slop is not an aesthetic whim but a declaration of principles, one that rescues imperfection and turns it into a differentiating value and a trait of delightful humanity.

Some critics celebrate it as a kind of digital neo-brutalism, a reference to the famous unadorned concrete architecture of the 1950s. This neo-brutalism takes digital nudity to the extreme: sites built with basic HTML and minimal CSS, where the code is displayed without artifice. The fonts are not elegant paid typefaces but the system fonts installed by default: Arial, Times New Roman, Courier. Photographs appear unretouched, with their digital noise and compression artifacts clearly visible.
Asymmetrical compositions, in short, that break any notion of classical balance.

Like children. This leads us to a style that is perhaps the opposite of cold brutalism, but equally opposed to AI slop: the aesthetic of a child's hasty sketch. Deliberately unbalanced proportions, freehand illustrations, elements that spill over the margins. Lindsay Marsh, a designer specializing in visual trends, points out that these visible “errors” act as signatures of authenticity: they are proof that behind the screen there are human fingers, not processors without humanity. The people at Phantom Watchers put it in a similar way: “It’s our way of saying ‘a human was here.’”

Any notable example? The recent redesign of the veteran magazine The Face is full of imperfections. Hell, it even looks like they programmed it in HTML.

What features does it have? Like AI slop itself, this opposition mutates in countless ways: disproportionately large fonts that defy traditional visual hierarchy, website scaffolding exposed in exhibitionist fashion (even leaving the code visible), and palettes limited to one or two colors on uniform black or white backgrounds, sometimes imitating the texture of analog collage. Templates are twisted on purpose, breaking with the obsessive symmetry that dominates more formal styles, a symmetry that is easier to imitate for the AIs that promise to set up an online store in just a few minutes and with a couple of prompts.

But… why? The guiding principles of this movement are clear: imperfection as a rejection of digital makeup, functionality without disguise, and outright refusal of prefabricated templates. “We don’t need decoration, we need design that just works,” summarized the U1CORE design team when analyzing one of the many tentacles of this anti-AI slop: brutalist minimalism, which is another label under which this new design trend is categorized.

There is philosophy behind it, too. And Japanese, no less.
Some evoke another architectural and decorative tradition: Japanese wabi-sabi, which finds beauty in the ephemeral and the defective. Cracks in walls and objects, time-worn textures, organic asymmetry… everything that algorithmic perfection rejects, anti-AI slop highlights. Many designers have given the name “post-AI visual fatigue” to the feeling that has given rise to all this: a collective exhaustion in the face of designs as polished as they are sterile and devoid of personality.

Who said punk? For some of us, those of us who are old dogs, this philosophy recalls the guidelines of early punk, the kind that made fanzines with headlines built from letters cut out of magazines. Back then ethics became aesthetics, and everything was photocopier militancy and album covers that looked like ransom notes. But along the way there was also opposition to a giant: the serious media, with their gray designs and content without stridency. Punk stood up to the establishment with filth and “do it yourself”. It sounds very familiar: AI is the new mainstream, and many are going hardcore.

Header | Kris Shakar