Meta spent a fortune on AI talent and data centers. Nine months later, the result: zero models

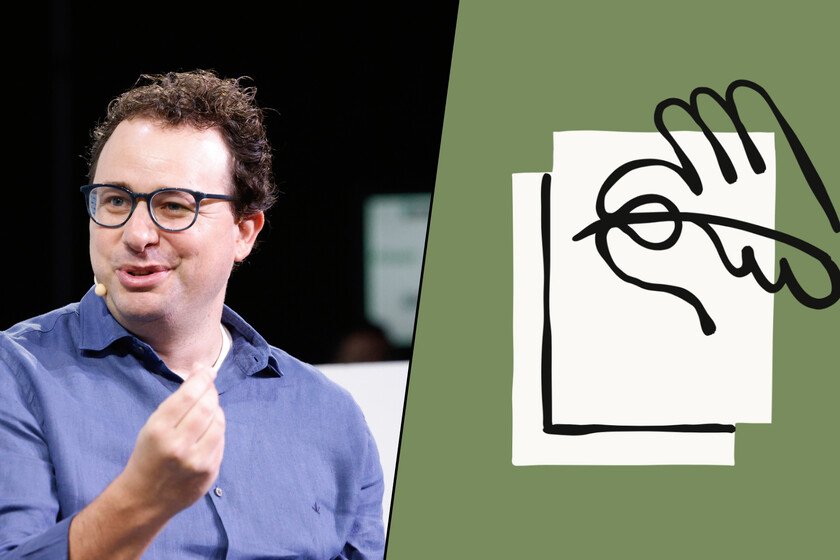

Mark Zuckerberg wanted to be the Florentino Pérez of AI. Last summer he began signing galácticos in this field, luring talent with stacks of millions of dollars. The most high-profile, of course, was AI wunderkind Alexandr Wang, who became head of its “Superintelligence” division. The funny thing is that the months keep going by and Meta doesn’t seem to have anything at all to show for it. And that is very worrying.

Delays. Despite having invested billions of dollars in restructuring the company to bet (practically) everything on AI, three internal sources confirm that Meta is finding it very difficult to meet its planned deadlines. The generative AI race waits for no one, and at company headquarters nerves are on edge because the roadmap is not being met.

Avocado, where are you? The new foundational AI model that Meta has been working on for months is known internally as Avocado, but for now it is not measuring up, which is reminiscent of what happened with Llama 4. Internal tests reveal that although it manages to surpass Llama 4 and the older Gemini 2.5, it falls short of Gemini 3.0 (and, of course, the recent Gemini 3.1).

Patience. Coming out with a model that is clearly worse than its rivals makes no sense, so Meta has decided to wait and delay the launch. Avocado is expected to hit the market in May at the earliest.

And meanwhile, Gemini. The situation is so critical that, according to these sources, the leaders of the AI division are considering something unthinkable: paying Google for a license to use Gemini in their own products, something Apple, for example, will do with Siri. That would be a clear sign that, for now, Meta’s own model is not capable enough to power the AI features of WhatsApp, Instagram and Threads.

Money does not equal speed. The company has spent billions of dollars on AI researchers and has committed to investing $600 billion in building AI data centers.
In January, Meta projected capex of $135 billion dedicated almost entirely to these projects, almost double the $72 billion it spent last year. Despite these investments, the company is currently absent from a field in which its competitors continue to advance.

Internal tension. According to these sources, Meta is becoming a tinderbox. The “TBD Lab” (for “To Be Determined”), the unit led by Wang, is working under maximum pressure on models named after fruits (Avocado, Mango, Watermelon), but has clashed with old-school Meta managers like Chris Cox and Andrew Bosworth. The company is trying to integrate those models with Meta’s advertising business, which is what pays for everything, but Wang doesn’t seem to handle that part of the business very well.

Goodbye to open models. Meta stood out at the beginning of this AI race as the company whose open models (not open source) were a cut above the rest. Llama became the standard in this area, but in this new stage that philosophy seems to be changing, and China now leads that segment. There is talk that both Zuckerberg and Wang lean toward closed models, such as those of OpenAI (GPT) or Google (Gemini). That approach gives the company full control over the code, a competitive advantage Meta does not seem willing to give up.

Few fruits from this tree. Despite the extraordinary deployment of resources, the results so far are poor. Meta’s only tangible product of those investments is Vibes, an application similar to Sora that has not fully caught on. Meanwhile, those initial talent signings have turned into departures: the trickle of AI researchers leaving the company to join others (or found their own projects) is growing.

In Xataka | Meta has been buying chips from NVIDIA and AMD for years. Now it also makes its own so as not to fall short