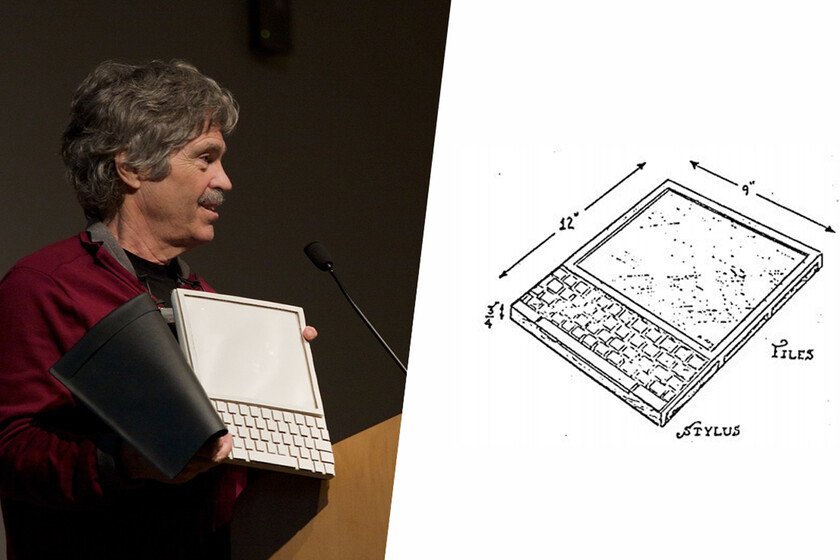

If I ask you to think of the oldest tablet you can remember, you may go back to the first iPad, released in 2010 (which, by the way, turned seven last week). Or, if you’ve been following the world of technology since before the turn of the century, you might be familiar with the Microsoft Tablet PC from HP Compaq, announced in 2001. In reality, someone had already tried to create one much earlier, in 1968, before the term “tablet” was even coined.

At that time, Alan Kay was a young researcher, soon to join the Xerox Palo Alto Research Center, who had been mulling over the concept of a personal computer for some time (in contrast to the military, business and professional uses that dominated among manufacturers then). After talking with researchers who were beginning to study how the programming language Logo could help young children learn math, Kay came up with an idea: “This encounter finally made me see what the real destiny of personal computing was going to be. Not a personal dynamic ‘vehicle’, as in Engelbart’s metaphor, as opposed to IBM’s ‘railway tracks’, but something much deeper: a dynamic personal ‘medium’. With a vehicle, one could wait until high school to take ‘driving lessons’. But if it was a medium, it had to extend into the world of childhood.”

In 1968, Kay created the Dynabook concept, which he would spend several years refining. The book “Tracing the Dynabook: A Study of Technocultural Transformations” describes it like this: “Kay called it the Dynabook, and the name suggests what it was going to be: a dynamic book. That is, a medium like a book, but one that was interactive and controlled by the reader. It would provide cognitive scaffolding in the same way that books and print media had done in recent centuries but, as Papert’s work with children and Logo had begun to demonstrate, it would take the advantages of the new computing medium and provide the means for new kinds of exploration and expression.”

“A personal computer for children of all ages”

With the idea of its function clear, Kay began to give it shape in cardboard prototypes (as can be seen in the image at the top of the article). In 1972, he presented his paper “A personal computer for children of all ages”, in which he offered more details not only about his motivation and his vision of personal computing at the time, but also about the device he had in mind.

His idea was a tablet-shaped personal computer aimed at education. It would be thin, with a liquid crystal touch screen and a keyboard, roughly the size of an ordinary notebook, with a graphical interface (revolutionary at the time) that could handle graphics, music and text, and with internal storage for 500 pages. The keyboard would not be the only way to enter information: it could also be done by voice. In the drawing Kay made, the word “stylus” also appears, although he did not discuss it in his paper.

Kay imagined that the Dynabook could connect to other systems (among them the ARPA Network) to “copy” information onto it, and he even predicted content “vending machines” whose material could not be accessed until payment had been made. “The books can be installed instead of being bought or loaned,” he wrote. Regarding digital “ownership”, Kay said the following: “The ability to easily make copies and own the information yourself is not likely to weaken existing markets, just as xerography has not weakened publishing but strengthened it, and just as tapes have not hurt the music industry but have provided a way to organize one’s own music. Most people are not interested in being a source or a bootlegger; rather, they like to trade and play with what they have.”

According to Kay’s calculations, the components would cost $294, so selling it for $500 was not unreasonable, although that was expensive for the time. “The average annual amount spent per child on education is only $850,” he noted, which is why he even proposed a different financing model: “perhaps the device should be given away as if it were a notebook, and only the content (cassettes, files, etc.) sold. This would be quite similar to the way TV packages or music are now distributed.” “Let’s do it!” he wrote to close his paper.

Unfortunately for Kay, and despite his enthusiasm, the Dynabook was never manufactured, because of a lack of support at Xerox and the technological limitations of the time. Do you remember what computers were like then? Now imagine trying to build a tablet.

Two Xerox PARC engineers, Chuck Thacker and Butler Lampson, asked for permission to build a similar machine on their own, and so the Xerox Alto was born, also known as the “Interim Dynabook”. It was not a tablet, far from it, but it kept some of the ideas that Kay had raised in his paper. The Xerox Alto was one of the first personal computers in history, and Steve Jobs and Apple’s engineers drew inspiration from some of its innovations and concepts, such as the graphical interface, for their own computers.

Kay is remembered not only for the Dynabook itself but also for the educational vision behind the project, for his distinctive view of the personal computing paradigm, and for how he anticipated some of the problems (and even technologies) that would come later. What’s more, in 2001 Microsoft presented its Microsoft Tablet PC, a project led by Chuck Thacker and Butler Lampson.
Yes, the same ones who once tried to implement …