A data center that will run on wind energy

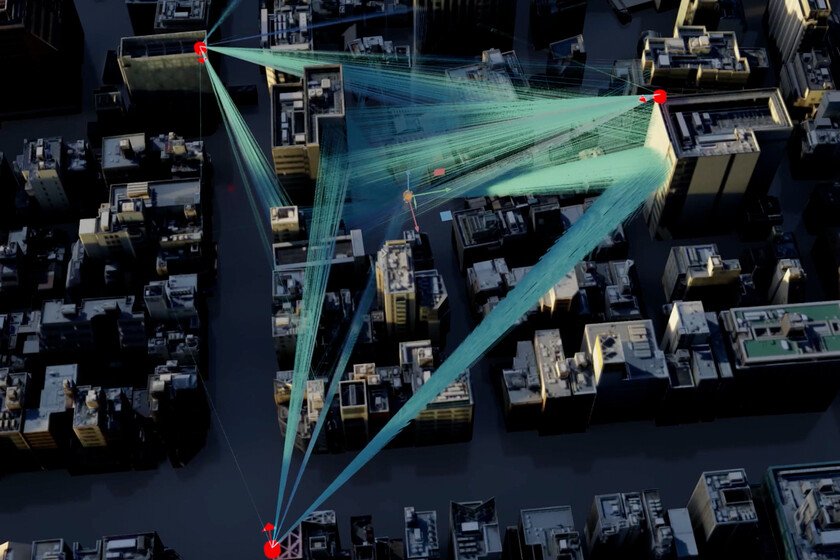

In the silent race the world is waging to dominate digital infrastructure, every move matters. And Brazil, far from being a spectator, once again occupies a strategic place. The arrival of the TikTok project in the Brazilian northeast confirms a shift in the world technology map: critical infrastructure is no longer concentrated only in the United States, Europe or Asia, but is beginning to expand towards regions that offer abundant renewable energy and direct international connections.

The announcement. TikTok has decided to install a mega data center in the Pecém Industrial and Port Complex, in the state of Ceará. The company detailed in its press release that it will allocate more than 200 billion reais (about 32 billion euros), the largest investment it has made in Latin America. Of that amount, 108 billion will be allocated exclusively to high-tech equipment through 2035; the rest will finance infrastructure, energy systems and future expansions. Operations are scheduled to begin in 2027, and local authorities estimate that more than 4,000 jobs will be created.

The infrastructure that the AI era demands. Data centers have become the engine that makes AI, cloud and streaming possible. As Wired notes, the push of artificial intelligence has sent the demand for computing soaring and has opened a global competition to build larger and more efficient infrastructure. Brazilian interest in attracting data centers rests both on its renewable energy matrix, cheap and abundant, and on the connectivity that Fortaleza offers as the entry point for most of the submarine cables that link the country with the United States, Europe and Africa.

A data center powered only by wind. For the initial phase, TikTok will work with Omnia, a local data center operator, and with Casa dos Ventos, one of the largest renewable energy developers in the country. The project is presented as an example of digital infrastructure powered entirely by clean energy.
TikTok and its partners will build dedicated wind farms to supply the center, which will allow them to avoid drawing energy from the public grid. According to the platform, this will avoid any pressure on local supply. On the technical side, the company states that it will use a closed water reuse circuit combined with air cooling to reduce water consumption. However, as the Government of Ceará has pointed out, cooling will be 100% air-based, and the use of water will be limited to human activities and maintenance. Furthermore, the installation will incorporate PG25 technology, which allows servers to operate at higher temperatures with less need for cooling, substantially reducing energy expenditure.

The voices that question the project. Not everything is cause for celebration. The main resistance comes from the Anacé indigenous people, who denounce, as reported by El País, that part of the complex would occupy territories that they consider ancestral. Their organizations affirm that no prior consultation was carried out and express concern about the possible socio-environmental impacts, both on the use of water and on the transformation of the territory. TikTok maintains that it complies with Brazilian regulations and emphasizes that its energy and cooling model will minimize any pressure on natural resources. The Government of Ceará adds that the companies involved must invest 15 million reais per year in the communities around the Pecém complex.

On the global board of digital infrastructure. The megaproject is part of a broader strategy. Lula's Government approved measures to reduce taxes and attract data centers, with the intention of transforming Brazil into a regional digital hub. In parallel, the United States promotes initiatives such as the Stargate project to maintain competitiveness in artificial intelligence, while China accelerates the expansion of its technology companies abroad.
TikTok, of Chinese origin, thus fits into a delicate diplomatic balance that Brazil tries to maintain. Beyond the economic investment, a data center of this scale raises debates about privacy, digital sovereignty and local data storage, dimensions increasingly present on the Brazilian legislative agenda.

The speed of digitization. The TikTok megaproject in Ceará symbolizes the tension of a world that is digitizing at unprecedented speed: it promises clean energy, employment and modernization, but it also reopens discussions about territory, regulation and environmental memory. Between the technological ambition of a digital power and the concerns of a community that defends its land, Brazil once again finds itself at the midpoint between global forces and local demands. The contrast is inevitable: while institutions celebrate the promise of a future powered by wind and data, indigenous communities in the northeast are a reminder that the technology that connects the world also leaves footprints on the ground they walk on. It is at this intersection between progress and grievances that the true impact of TikTok's new digital heart in Latin America will be defined.

Image | PXHere and Greenwish