Within Meta there is a race to see which employee consumes the most AI tokens. It’s the ‘Tokenmaxxing’ of Silicon Valley

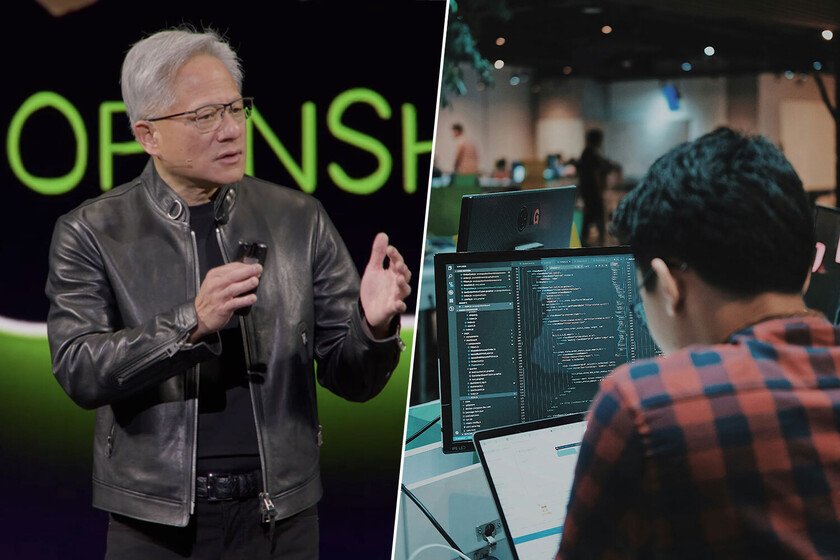

There is a battle inside Meta: to see who spends the most AI tokens. The token is the basic unit AI uses to process the language in which we give it instructions. It acts as a “bridge” between our words and the numbers a machine can work with. That is why, whenever companies such as OpenAI, maker of ChatGPT, or Google present a model, they boast about the millions of tokens it can process. But tokens are also becoming a unit of spending at AI companies in Silicon Valley, so much so that they may be fostering a toxic work culture. Meta is one example: its employees compete to see how many tokens they can consume and earn the title of Token Legend.

Tokenmaxxing. This is not the first time we have covered this. A few days ago, Jensen Huang, CEO of NVIDIA and one of the main drivers of this phenomenon, said he would be worried if an engineer earning $500,000 did not spend at least $250,000 a year on tokens. Tokens cost money, and NVIDIA is already considering offering them as part of the signing bonuses for its artificial intelligence engineers.

Goals. Unsurprisingly, Meta does not want to miss out. The company, which changed its name when the metaverse was supposed to be the next big thing and, after that about-face, now describes itself as an “AI-native company”, is among those encouraging their AI engineers to keep count of the tokens they spend during the day. There is no official data, but reports in outlets such as Business Insider and The Information indicate that some of these teams have very specific token-related targets. For example, the company expects 65% of its engineers to write more than 75% of their code with AI tools by the middle of this year. The Scalable Machine Learning division has its own target, and so on across Meta's code-related departments.

Token Legend.
The Information reports that employees themselves created an internal leaderboard to gamify the work. It lists the 250 heaviest AI users with a simple premise: the more tokens you spend, the higher you climb. The winner of this particular competition earns the title of ‘Token Legend’. An expectation is turning into a kind of internal sport.

*The first paragraph of this article converted to tokens.*

Crazy spending. If we paste the first paragraph of this article, 542 characters long, into OpenAI’s ‘Tokenizer’ tool, we see that this short passage alone already consumes 121 tokens. According to The Information, total usage on that internal leaderboard over the last 30 days exceeded 60 trillion tokens.

Even if it is dressed up as sport and competition, it is still compulsory. In late 2025, Meta launched the ‘Level Up’ program, in which employees who complete the most tasks using AI earn badges. More importantly, it made AI use a central criterion in its employee performance reviews, which directly affects pay and promotions.

Doubts. Beyond the paradox of paying to work, there are deeper issues. One criticism of tokenmaxxing is that AI companies like Meta or NVIDIA encourage token spending because it turns their own employees into consumers of the product they are building. Software engineering analyst Gergely Orosz offered a simple comparison: it is as if Tim Cook, CEO of Apple, said he would be worried if an employee earning $500,000 a year did not spend $50,000 on App Store purchases. Orosz went on to argue that productivity should be measured by the results obtained, not by tokens spent.

Industry issue. In any case, Meta and NVIDIA are not the only companies that measure employees by their AI consumption at work.
The practice is spreading to other major AI companies, turning tokens into an extra perk within engineers’ compensation, alongside base salary, performance bonuses and stock. It is estimated that an OpenAI engineer can process 210 billion tokens in a week, and there are Claude Code engineers who run up more than $150,000 in tokens in a single month. In effect, part of an engineer’s pay flows back into the company that pays it.

And what does Meta say? That it is about quality, not volume: the company points out that performance rewards are based on the impact of the work, not on raw AI use.

Image | ‘Wolf of Wall Street’, Meta logo (edited)