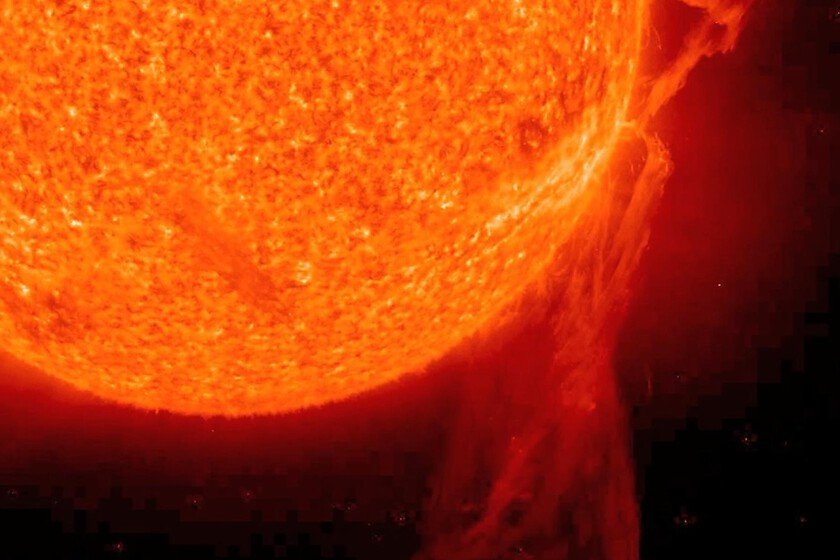

The molecule that stores the sun for years and releases heat just when you need it

In winter, raising the blinds around midday to take advantage of the sun’s light and warmth is a good way to heat the house while saving on heating. Of course, as the afternoon wears on and night falls, the sun and its heat are gone. From an energy point of view, it would be fantastic to be able to store the sun in a bottle and release its heat when needed.

A research team at the University of California, Santa Barbara, has come up with something along those lines and published it in Science: a molecule that captures sunlight, stores it for years without loss, and releases it on demand. No plugs or batteries.

Professor Grace Han’s group has synthesized a modified organic molecule inspired by DNA. It is called pyrimidone, and it can capture solar energy, store it in chemical bonds and release it as heat in a controlled, reversible way. In short, it behaves like a battery.

Context. The bottled-sun analogy points to one of the big practical problems of solar energy: the difficulty is not so much capturing it as storing it, because there is not always enough sun to meet demand. And conventional batteries degrade, are heavy, carry inherent safety and handling risks, and are expensive (although their prices are now at record lows). What Han’s team is proposing is not new: molecular solar thermal energy storage, known as “MOST” for short, has been researched for years. Until now, however, no system had managed to combine a competitive energy density with release temperatures high enough for practical use.

Why it matters. Because this research breaks two key barriers and brings MOST closer to becoming a reality. It has an energy density of more than 1.6 megajoules per kilogram, almost double that of a standard lithium-ion battery. And it releases enough heat to boil water under ambient conditions.
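
To give a rough sense of scale for the 1.6 MJ/kg figure, here is a back-of-the-envelope sketch in Python. It is not taken from the study: the specific heat of water, the 20 °C starting temperature and the assumption that every joule reaches the water with no losses are all illustrative choices, and it only covers heating water up to 100 °C, not boiling it away.

```python
# Back-of-the-envelope check of the energy density quoted in the article.
# Everything except the 1.6 MJ/kg figure is an illustrative assumption.

ENERGY_DENSITY_J_PER_KG = 1.6e6  # from the article: "more than 1.6 megajoules per kilogram"
WATER_SPECIFIC_HEAT = 4186.0     # J/(kg*K), textbook value for liquid water (assumption)
START_TEMP_C = 20.0              # assumed room-temperature tap water
BOIL_TEMP_C = 100.0              # boiling point at sea-level pressure


def joules_to_watt_hours(joules: float) -> float:
    """Convert joules to watt-hours (1 Wh = 3600 J)."""
    return joules / 3600.0


def water_mass_heated(energy_j: float,
                      start_c: float = START_TEMP_C,
                      end_c: float = BOIL_TEMP_C) -> float:
    """Mass of water (kg) that energy_j can warm from start_c to end_c.

    Ideal case: no losses, and no latent heat of vaporization included.
    """
    return energy_j / (WATER_SPECIFIC_HEAT * (end_c - start_c))


# Energy stored in 1 kg of the molecule, in more familiar battery units.
wh_per_kg = joules_to_watt_hours(ENERGY_DENSITY_J_PER_KG)

# Water that 1 kg of the molecule could bring from 20 C to 100 C.
water_kg = water_mass_heated(ENERGY_DENSITY_J_PER_KG)

print(f"{wh_per_kg:.0f} Wh per kg of molecule")
print(f"{water_kg:.1f} kg of water heated from 20 C to 100 C")
```
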
It is also soluble in water, which makes it potentially compatible with the circulation systems used in solar collectors. These properties open the door to uses such as home heating and domestic hot water (DHW), areas without an electrical grid, or systems integrated into roofs.

How it works. Despite the comparisons with solar power, its mechanism is completely different from that of photovoltaic cells. In other words, it does not convert light into electricity; it transforms it into chemical energy that it stores in its chemical bonds. The molecule, which was designed with computational modeling to be as compact as possible, works like a spring: on absorbing ultraviolet light it undergoes a reversible change in shape and moves into a high-energy state. It can remain stable in that state for years until an external stimulus causes it to relax, releasing the stored energy as heat. As Han Nguyen, lead author of the article, puts it, “the concept is reusable and recyclable.”

From Barcelona to California. MOST systems have been in the laboratory for a long time. In 2024, a team from the Polytechnic University of Catalonia published a paper in Joule on a hybrid device that integrated a MOST system directly into a silicon photovoltaic cell. The idea is that the organic molecules (made of carbon, hydrogen, oxygen, fluorine and nitrogen) do double duty: they store energy and also act as an optical filter and cooling agent for the solar cell. The molecules absorb the UV photons that silicon does not use well, cool the cell and store that surplus as chemical energy. As a result, the solar cell generates more electricity and nothing is wasted: the system achieved a solar utilization efficiency of 14.9% and a record MOST storage efficiency of 2.3%.

Yes, but.
The fact that two independent studies, published at different times, both work on MOST shows that this technology is more than a mere laboratory concept: it is getting closer to real applications. Of course, like any other innovation, it still faces the challenges of scalability and cost, both essential for eventual industrial deployment.

Cover | POT