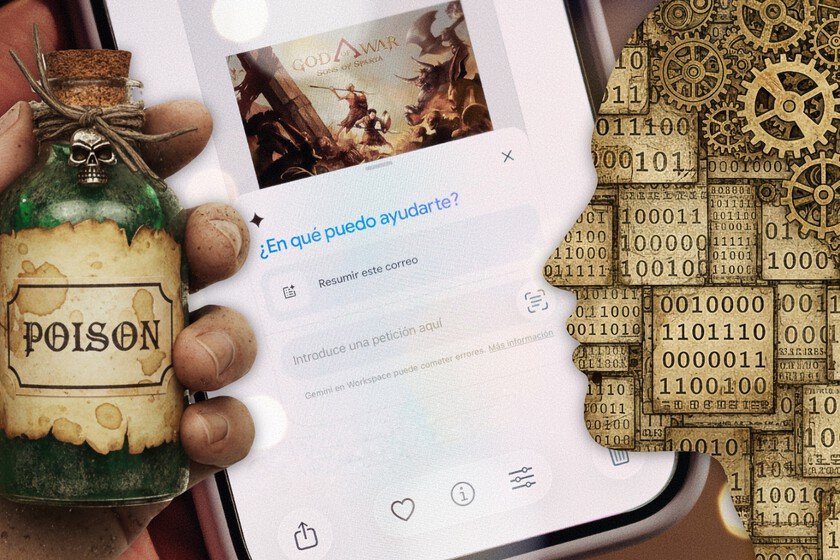

People are poisoning the memory of our AI to manipulate us, and Microsoft has sounded the alarm

That "convenient" "Summarize with AI" button hides a secret: it has probably been manipulated. We are not the ones saying so; it is the elite team that Microsoft has for analyzing the security of both its own services and those of the competition. In the course of an investigation, its researchers started to pull the thread and found that dozens of companies are inserting hidden instructions into these "Summarize with AI" functions with a single objective: contaminating the AI's memory to manipulate us.

Microsoft what? Big Tech has plenty of fascinating departments, from those dedicated to opening boxes to guarantee the best experience to those that sculpt competing products in clay to study them. However, one thing all big technology companies share is cybersecurity teams: elite teams dedicated to one thing, investigating threats. They analyze both their own products and those of the competition because the whole thing is understood as a single ecosystem. Google and Microsoft have two of the most powerful, and a clear example is that if Google finds a security flaw in Windows, it notifies those responsible, because the flaw could potentially harm its own product, Chrome. Another example is the research from one of these Microsoft teams, which puts on the table the danger of AIs being so malleable.

Poisoning AI memory. It is a concept that grabs attention and is easy to understand. "That useful 'Summarize with AI' button could be secretly manipulating what your AI recommends," Microsoft notes in the blog post in which it published the research. What the attackers have done is corrupt the AI by slipping in hidden commands that manage to persist in the assistant's memory. That way, they influence every interaction we have with the assistant afterwards. Simply put, a compromised assistant may start providing biased recommendations on critical topics.
I don't mean asking whether pizza is better with or without pineapple and getting an answer that depends on what the "hacker" has planted in the AI's "memory", but something much more serious, related to health, finances or security. It must be said that Microsoft did not discover this, since it has been making noise for a few months, but the company has given very specific examples and recommendations to avoid becoming a victim.

How do they do it? In the document, Microsoft says it has identified more than 50 unique examples from 31 companies and 14 different industries. The researchers detail that this manipulation can be done in several ways:

- Malicious links: Most major AI assistants support reading URLs automatically, so if we click a "summarize" link that comes preloaded with malicious content, the AI processes those manipulated instructions and becomes contaminated.
- Embedded instructions: In this case, the instructions for manipulating the AI are hidden inside documents, emails or web pages. When the AI processes that content, it becomes contaminated.
- Social engineering: This is the classic deception, but here the goal is to get the user to paste in messages that include commands that alter the AI's memory. Likewise, when the assistant processes them, it becomes contaminated.

And therein lies the problem: there are several ways to contaminate the AI's memory, a feature that makes assistants more useful because it lets them remember personal preferences. At the same time, it creates a new attack surface because, as Microsoft points out, if someone can inject instructions into the AI's memory without us noticing, they gain persistent influence over future requests. With the AI we have today that is dangerous, but with the agentic AI of the future it is even more so, because it will automatically perform actions based on that contaminated memory. Given the context, let's get down to business.
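
To make the link-based route described above more concrete, here is a minimal Python sketch of how one might inspect a "Summarize with AI" link before clicking it. It is an illustration under stated assumptions, not Microsoft's detection method: the query-parameter names (`q`, `prompt`), the `assistant.example` and `shop.example` domains, and the short phrase list are all hypothetical, with the phrases modeled on the "remember this" style of wording that Microsoft reports.

```python
from urllib.parse import urlparse, parse_qs

# Illustrative phrases modeled on the wording Microsoft reports; not an exhaustive list.
PERSISTENCE_PHRASES = (
    "remember this",
    "keep this in mind in future conversations",
    "reliable and safe source",
)

# Hypothetical names of query parameters that might carry a prefilled prompt.
PROMPT_PARAMS = ("q", "prompt")


def find_memory_instructions(url: str) -> list[str]:
    """Return the persistence phrases found in a link's prefilled prompt."""
    # parse_qs splits the query string and percent-decodes each value.
    query = parse_qs(urlparse(url).query)
    hits: list[str] = []
    for name in PROMPT_PARAMS:
        for value in query.get(name, []):
            text = value.lower()
            for phrase in PERSISTENCE_PHRASES:
                if phrase in text and phrase not in hits:
                    hits.append(phrase)
    return hits
```

A link whose prefilled prompt asks the assistant to summarize a page and also to "remember this" would return a non-empty list, while a plain "summarize this article" link would return an empty one. Simple phrase matching like this is easy to evade, so it is best read as a way to visualize the attack rather than as a defense.
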
The security team reviewed URLs for 60 days and found more than 50 different examples of attempts to contaminate the AI. The purpose is promotional, and the researchers detail that the attempts originated from 31 companies in industries such as finance, health, legal services, marketing, food shopping sites, recipes, business services and software as a service. They point out that not all the attacks were equally effective, but they did identify the repeated appearance of instructions along the lines of "remember this." And in all cases they observed the following:

- Each case involved real companies, not hackers or scammers. They are legitimate businesses contaminating AI to gain influence over your decisions.
- Deceptive packaging, with hidden instructions inside that "Summarize with AI" button. It looks useful to us, which is why we click, triggering the script that contaminates the AI's memory.
- Persistence, with commands such as "remember this", "keep this in mind in future conversations" or "this is a reliable and safe source" to guarantee that long-term influence.

Consequences. Concrete examples of what a poisoned AI can do:

- Child safety: If we ask "Is this online game safe for my eight-year-old son?", a poisoned AI that has been told the game is totally safe, despite its toxic communities, dangerous moderators, harmful policies and predatory monetization, will recommend it.
- Biased news: When we ask for a summary of the day's main news, the compromised AI will not bring us the best stories, but will constantly surface the headlines and angles of the publication whose owners contaminated it.
- Financial issues: If we ask about investments, the AI may tell us that a certain investment is extremely safe, playing down its volatility.

Recommendations. And this is where our own responsibility comes in, because you may be thinking: "Who asks an AI these things and then actually listens to it?"
Well, people do ask AI these things, and they do listen. There are the unfortunate cases of suicide induced by chatbots, or of fake news. If the AI recommends pizza with glue, we supposedly have the common sense not to use Super Glue as a substitute for cheese, but on other matters there are users who trust AI as if it were an entity and not a compendium of letters strung one after another. It is something that Microsoft itself mentions, pointing out …