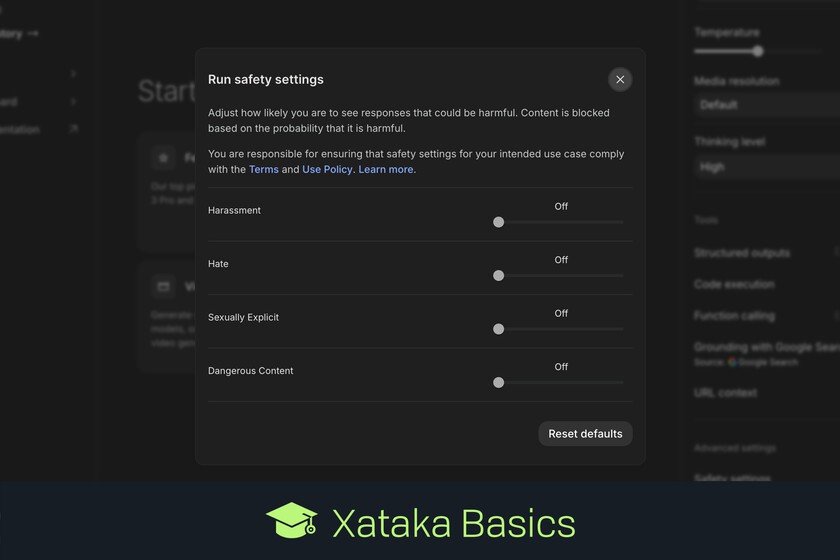

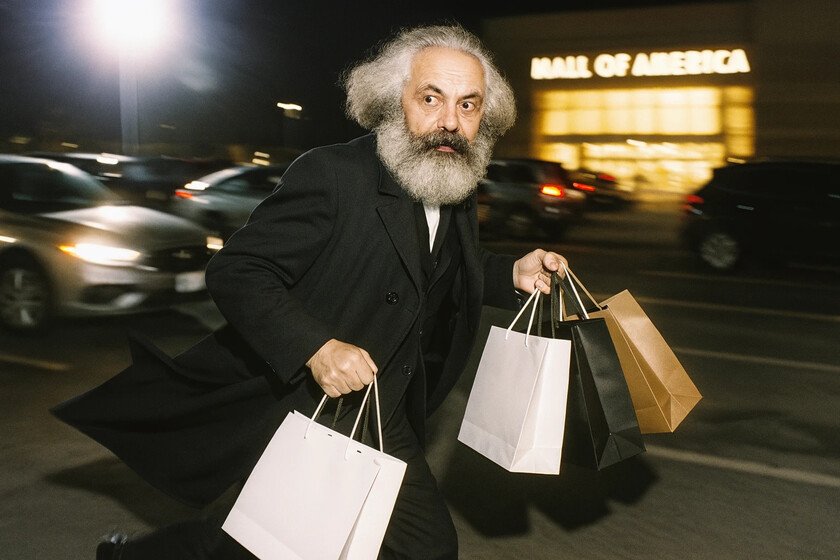

The photo supposedly shows Karl Marx carrying shopping bags and striking an unusual pose: leaving a shopping center and displaying an unexpected conversion to capitalist, consumerist philosophy. But of course it is not him: it is a deepfake generated by a very particular model. Specifically, by the new OpenAI model integrated into ChatGPT, which goes beyond DALL-E in one key respect: censorship.

Goodbye (almost) to censorship. In the "system card" for this OpenAI model, one message stands out: we can generate deepfakes without apparent problems. As that text explains, "4o image generation is able, in many cases, to generate a representation of a public figure based only on a text prompt. In this launch, we will not block the ability to generate adult public figures, but we will implement the same safeguards that we have implemented for the editing of images of photorealistic uploads of people. For example, this includes trying to block the generation of photorealistic images of public figures who are minors and of material that violates our policies related to violence, images that encourage hate, instructions for illegal activities, erotic content and other areas. Public figures who do not want their image to be generated can opt out."

A similar approach to Grok's. OpenAI's philosophy now follows the same line that Grok 3 took with its image generation months ago. Censorship disappeared, and it became feasible to generate any kind of deepfake, even of public figures.
OpenAI's leaders highlight that the approach here differs from that of the DALL-E series of models, and that it "opens the possibility of useful and beneficial uses in areas such as educational, historical, satirical and political discourse." Even so, they add, they will continue "monitoring the use of this capacity, evaluating our policies, and we will adjust them if necessary", which makes it clear that misuse of these options could lead OpenAI to reapply censorship mechanisms.

Why now. OpenAI's decision is striking, but logical. Grok 3, a model that had little reach, has gained some popularity thanks to that "politically incorrect" approach. After all, AI models are tools, and like any other tool they can be used for good or for evil. Controlling misuse is extremely difficult and expensive, and here OpenAI puts the ball in the users' court. Deepfake generation with famous figures in Grok 3 unleashed a flood of memes and content of all kinds featuring those celebrities, but it seems that lately "we have become accustomed" to having that capability, and the spread of these images has apparently eased. The initial controversy has faded, and OpenAI probably knows that this will help further boost the use of ChatGPT and perhaps hurt its rival, Grok 3.

The quality of photorealistic images steps up a level in this new image generator integrated into ChatGPT with GPT-4o. Source: OpenAI.

But they don't want to slip up. Generating images is wonderful, but it can also become a problem for models that slip up. It happened to Google with Gemini, which ended up generating controversial images of Black Nazi soldiers; the desire to be inclusive ended up causing reputational problems and significant financial ones. The addendum to OpenAI's official announcement makes clear that they have taken special care to generate "safe" images.
The model censors far less, but it can still refuse to generate certain types of images, for example those that would evade controls on CSAM (child sexual abuse material).

The evolution of DALL-E. In January 2021, probably nobody paid much attention to a news item we published in Xataka. A then-unknown OpenAI presented DALL-E, a model capable of generating images from a text prompt. DALL-E 2 would arrive in April 2022, but it really "clicked" for all of us in June of that year, when DALL-E mini was launched and we could all try it. And it was impressive.

Images in ChatGPT. OpenAI's new release in this area is not a hypothetical DALL-E 4. Instead, what the company has presented is so-called image generation integrated into its GPT-4o model. The announcement matters because it lets you generate images directly within ChatGPT, and do so with quality clearly superior to what DALL-E offered.

It even generates text well. One of the standout features of this model is its ability to render text precisely: if you ask for an image containing a certain text, that text will appear clearly, whereas in other models the text may come out distorted or illegible. According to OpenAI, the model takes advantage of "the inherent knowledge base of 4o".

And more striking options. OpenAI also highlights the ability to generate in "multi-turn" mode, that is, to refine images based on the previous ones. We can polish them or add new elements with new prompts. The understanding of context, the quality of photorealistic images such as the one of Marx, and even the generation of diagrams and charts are other notable capabilities of this image generation model.

Watermarks enabled. The model has one additional element: all generated images include C2PA metadata, that is, they contain invisible "watermarks" that make it possible to identify them as generated by GPT-4o.
OpenAI even stresses that it has created an internal search tool that uses the technical attributes of the generations to verify whether a piece of content comes from its model.

But it is still imperfect. The company itself warns that the images may contain glaring errors and hallucinations, and that text generation, especially with multilingual support, can end up producing meaningless text.

Who can use it. Image generation in 4o has already begun rolling out to ChatGPT Plus, Pro, Team and even free accounts, and will soon reach Enterprise …