will no longer pause dangerous models if the competition releases them first

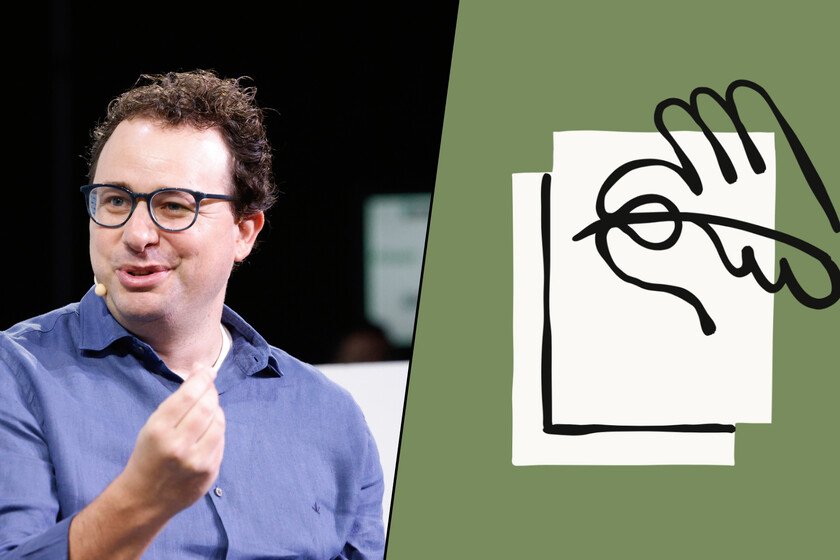

Anthropic is in the middle of a major dispute with the Pentagon that may end up shaping the future of the company. Founded with safety as its reason for being, it has just rewritten the rules that defined it. Its “Responsible Scaling Policy”, the document that established when to stop developing a model that is too dangerous, has become a mere roadmap with flexible objectives. The change matters much more than it seems, not only for Anthropic but for the rest of the industry. Let’s get to it.

What exactly has changed. Until now, Anthropic’s policy stated that the company would pause training or delay the launch of a model if its capabilities advanced faster than sufficient safeguards could be developed. That is: if a model was too powerful to be controlled safely, it was stopped. That is over. The new policy removes that automatic braking mechanism and replaces it with a series of public commitments, along with regular risk reports audited by third parties. The company itself confirmed the change in an official statement.

Why have they done it? The company gives two main reasons. The first is the competitive environment: OpenAI, Google and xAI are advancing without restrictions of this kind. “We didn’t feel it made sense to make unilateral commitments if competitors are moving full speed ahead,” Jared Kaplan, chief scientific officer at Anthropic, told Time. The second, predictably, is political: Washington has turned its back on AI regulation, and Anthropic acknowledges on its blog that the current anti-regulatory climate makes its own safeguards asymmetrical with respect to the rest of the sector.

Paradox. From Anthropic’s point of view, this is not a renunciation of safety but a decision grounded in it.
Their reasoning: if the more responsible actors (a group in which they naturally include themselves) stop while the less careful ones move forward, the net result is “a less safe world.” The logic has a certain coherence, but it also means accepting that safety depends on what the competition does. And that is a very dangerous game.

Context. Anthropic was founded by former OpenAI executives, including Dario Amodei, who left that company precisely because they believed it did not pay enough attention to the risks of AI. The new policy comes at a time when several safety researchers have left the company. As The Wall Street Journal reported, one of them, Mrinank Sharma, wrote a letter to his colleagues this month saying that “the world is in danger” because of AI, before announcing his departure. According to sources close to the outlet, his departure is reportedly related in part to this decision.

What’s happening with the Pentagon? The announcement comes amid open tension with the Pentagon. US Secretary of Defense Pete Hegseth gave Anthropic an ultimatum on the same Tuesday that the policy change was made public: modify its red lines on the use of Claude or risk losing a $200 million contract with the Department of Defense. Anthropic has made it clear that the two issues are independent, but the coincidence in timing has not gone unnoticed.

What remains of the safety policy. It is not a total abandonment. Anthropic still commits to delaying the development or deployment of “highly capable” models in specific circumstances, and to publishing detailed, externally verified risk reports every three to six months. The company also now separates its own internal guidelines from its recommendations for the rest of the sector, implicitly acknowledging that its bet on a “race to the top” that other companies would join has not worked as expected.
Cover image | Wikimedia Commons and Anthropic