Sam Altman is laying the foundations for post-humanism as the philosophical current of the AI era. It’s not good news

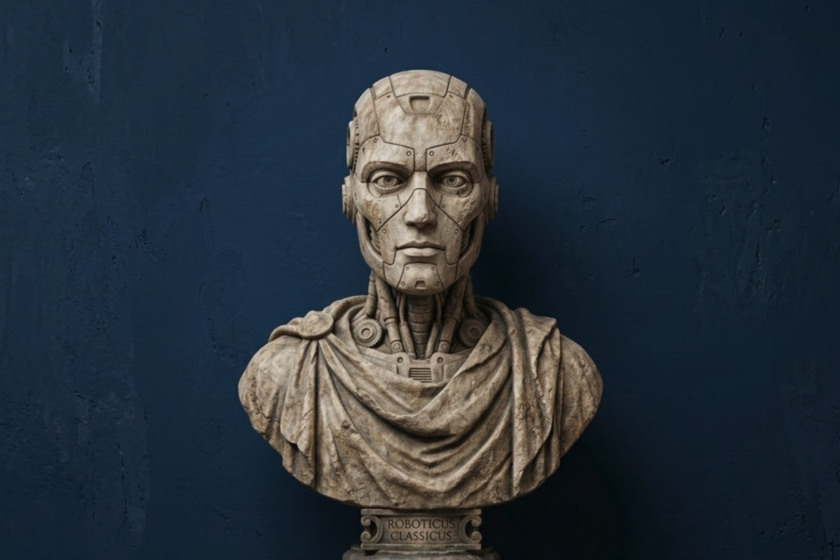

“But it also takes a lot of energy to train a human. It takes about 20 years of life and all the food you consume during that time to become intelligent.” These two sentences, delivered at the India-AI Impact Summit 2026, were enough to set social networks on fire. But Sam Altman didn’t stop there. “Not only that, it took the widespread evolution of the 100 billion people who have lived and who learned not to be eaten by predators and to understand science and so on to create you,” he continued. Therefore, he argued, criticisms about “how much energy is needed to train an AI model” are extremely unfair. And that is curious.

The most “unpopular” technology in history…

It is curious not because the argument is hard to understand (or even because it is unreasonable). It is curious because Altman and the rest of the AI bigwigs don’t seem to realize that they are making every effort to make AI extremely unpopular among the population.

Maybe it’s nothing new. Maybe it’s something similar to what happened with the salesmen of textile machinery in the midst of the Industrial Revolution. Maybe it’s something similar to what motivated movements like the Luddites, and the reason why dozens of historians rewrote their history as that of poor technophobes.

What has changed is that it is now being broadcast live to the entire world, and very insistently. Yet the discourse they use to ‘sell’ their technology to investors, technical elites and politicians around the world can only be understood by the general public as a very sophisticated way of saying: ‘human things get in the way.’ Or not so sophisticated, of course.

…that is finding its “public”

Over the last few years, in fact, the process has become less subtle and more blatant. It is not limited to AI companies, but it is an increasingly clear phenomenon: people speaking to a convinced hyperminority while alienating the vast social majority.
And artificial intelligence is the tip of the spear. It wouldn’t be a problem if there weren’t something else at play: the great technological battle of our time is not only technical, it is also a battle of ideology, philosophy and values. For the social changes they hope for to succeed, the ‘Overton window’ has to be moved as quickly as possible.

And it’s working. The best example is Japan, where Team Mirai ran in the last election. As Antonio Ortiz explained, it is “a new Japanese party founded by engineers” with “a fairly accelerationist program: government chatbots and databases for transparency of donations and to make politics ‘faster’, reduce paperwork and achieve an increase in productivity to compensate for the labor shortage.” Well, those people just got 11 seats and 7% of the votes.

In a way, these two apparently contradictory processes are two legs of the same phenomenon: the discourse becomes more explicit as part of the population becomes more receptive to it.

And changing the world is also (and above all) changing ideas. We tend to have a softened vision of social change. However, several psychosocial processes are usually key to carrying it out: delegitimization (“what ruled until now no longer deserves obedience”), demonization (“those who hold these ideas are evil”) and dehumanization (“they are not human, moral norms do not apply”). The last step is not always reached, but some degree of moral disengagement is necessary.

And the artificial intelligence revolution (with all the tensions it brings) keeps showing similar signs: for years, accelerationist and posthumanist groups have been ‘operating’ in the shadow of the great social and political discourses. Now, however, they are coming out into the open: as AGI approaches, everything we thought we knew (on a social, economic or institutional level) is useless. Or so they try to make us believe.
And the best example is Altman himself: the CEO of OpenAI does not have to declare himself a posthumanist to lay the rhetorical tiles along which these discourses will travel. When you reduce the human being to an energy cost comparable to that of an AI model, you are lowering the bar to justify “anything” in the name of efficiency.

But what exactly is all this talk about posthumanism and accelerationism? They are two different philosophical traditions: posthumanism questions classical humanism and lays the foundations for moving beyond it, while accelerationism is a family of ideologies that propose accelerating certain dynamics (technological or capitalist) to provoke radical social change. The truth, though, is that in recent years they have ended up converging.

Beyond that, they are providing the mental framework that allows certain decisions to be made that, in other scenarios, would not be socially acceptable. When the human being ceases to be the ideological ‘center’ of the system, acceleration becomes the great political principle, and AGI becomes the utopian destiny of a post-scarcity society (the modern equivalent of the Christian heaven or the Marxist classless society), everything that opposes this, rightly or wrongly, will come to be seen as old, obsolete or outdated.

Altman’s statements in India are not an accident: they are part of the delegitimization of the current system of values that the next revolution needs and that, as we can see, is already underway.

Image | Xataka