Some Amazon employees use AI just to inflate their token metrics

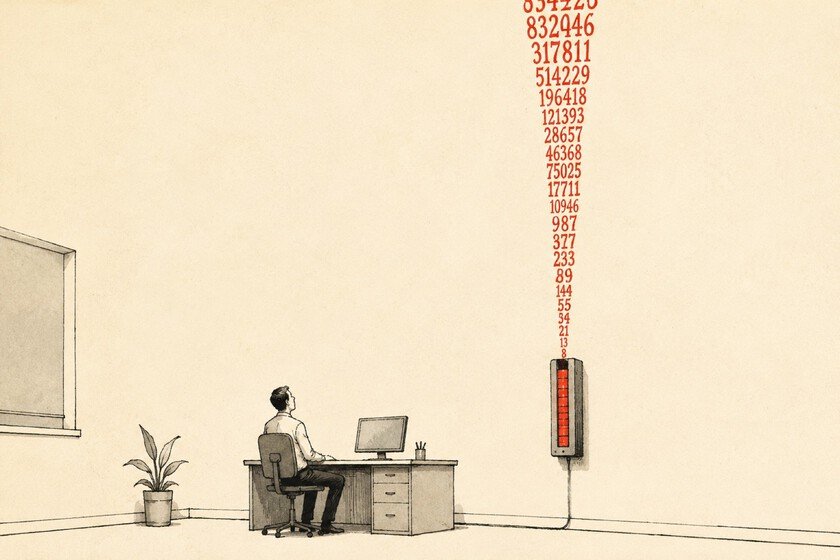

Tokenmaxxing now has its best-documented case. For weeks, some Amazon employees have been using MeshClaw, an internal AI agent tool, to automate unnecessary tasks and thus inflate their token consumption on the internal scoreboards the company has set up. This is not the first time something like this has happened in Silicon Valley: Meta had its own token leaderboard, with a winner who took the title of Token Legend, and similar patterns have been documented at Microsoft. But the Amazon case adds a detail that makes it more striking: the tool used to cheat is the same one that Amazon has officially deployed to make its engineers work better.

Why it matters. Amazon requires more than 80% of its developers to use AI tools each week and measures compliance with scoreboards that track LLM consumption. The company has said those statistics will not be used in performance reviews. Several employees have responded with variations of the same phrase: managers are looking at them. “When you track usage, you create perverse incentives and there are people who are very competitive with this,” one of them told the Financial Times.

Yes, but. There is a more generous reading. Pushing a large organization into contact with new tools has a certain logic: if enough people are made to use them, someone eventually finds a genuinely useful application. The problem is that this only works if there is real exploration. An employee who delegates to an agent the task of summarizing emails that no one will read is not learning anything; he is just inflating his metrics.

The big question. Amazon has committed $200 billion to AI infrastructure whose capacity, in theory, is absorbed by demand as it is deployed. If part of that internal consumption is pure tokenmaxxing, the figures that justify that investment are less reliable than they seem.
The distinction between real adoption and inflated consumption matters because the former generates lasting demand while the latter disappears as soon as the incentives change. Amazon has already restricted public access to device usage statistics. When the scoreboard is no longer visible, the behavior it encouraged changes too.

Go deeper. Goodhart's law has been explaining this for fifty years: when a measure becomes a target, it ceases to be a good measure. Amazon hasn’t built a system to find out whether its engineers are using AI well. It has built a scoreboard, and scoreboards get gamed.

In Xataka | If the question is whether using ChatGPT or Claude in English is more efficient and saves tokens, the answer is: yes

Featured image | Xataka