In the midst of the RAM crisis, Intel counterattacks with ZAM, the chip meant to break South Korean hegemony

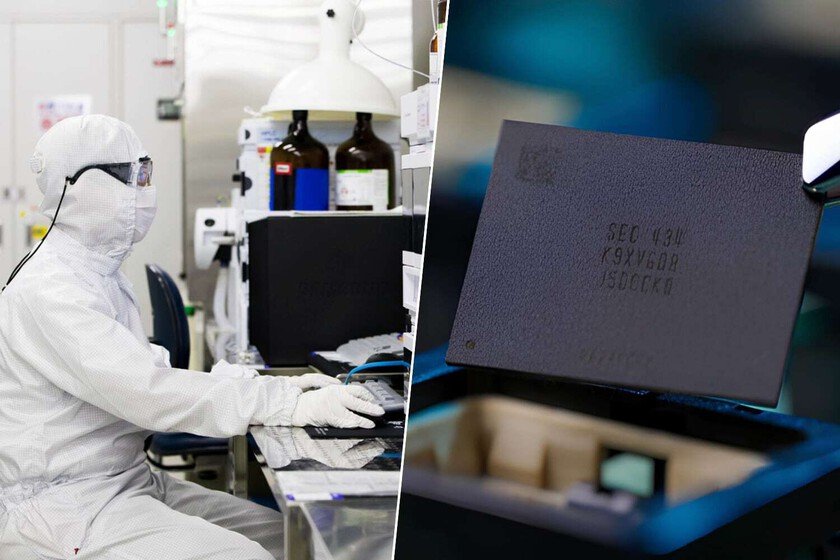

Not so many years ago, few would have guessed Intel's transformation. The company that dominated consumer and server processors for generations has endured a real trek through the desert under AMD's dominance. However, it has come back fighting: not only has it positioned itself, by way of a rescue, as the great American foundry, but it is also looking to take a bite out of the gigantic South Korean RAM industry with its new memory, ZAM. And its weapon is three-dimensionality.

Z for 'zolution'. Do you remember drawing your first cube in math class? The X axis runs east-west. The Y axis runs north-south. What the square needed to become a cube was the Z axis, the one that runs up and down. That is what the engineers at SAIMEMORY, the company born from the collaboration between Japan's SoftBank and Intel, have applied to traditional DRAM with a single objective: to assault the enormous market for high-bandwidth memory, or HBM, which dominates data centers.

Puff pastry. A few months ago we told you that the two companies had embarked on a joint path to stand up to the dominance of Samsung, SK Hynix and Micron in high-performance memory. HBM is preferred for data centers because its enormous bandwidth allows a greater number of simultaneous operations. It is like a huge highway. However, it has limitations: it is expensive to produce, requires a lot of energy, and gets hot enough to need expensive cooling systems. Conventional DRAM was not an alternative, but Intel and SoftBank began to 'play around' with stacked DRAM. It is like a puff pastry of RAM (simplifying things a lot), and its main limitation lay in connecting each of those thin layers of memory so that the final product had the same capabilities as that highway that is HBM.

ZAM. After a few months of research, SAIMEMORY and Intel presented the ZAM prototype a few days ago at the Intel Connection event in Japan.
According to the companies, a ZAM module can have a capacity of up to 512 GB, is easy to produce because it consists of vertically stacked chips and, most importantly, can reduce energy consumption by 40% to 50% compared with conventional HBM. Where HBM is expensive and slow to produce, ZAM is cheaper and could help ease supply chain constraints. It would also lower the energy consumption of data centers (one of their main problems) and is easier to cool. For now, the company's research points to a theoretical limit of 20 layers, while current designs sit at around 16 layers, so performance could improve if this limitation can be overcome.

Real alternative. Intel's ambition is total: the company says its technology for joining DRAM modules allows it to offer two to three times the capacity of HBM modules while being up to 60% cheaper to produce. It all sounds like upside, and the technology cannot be a bad bet when established HBM giants such as Samsung are also researching how to overcome the connection limitations of stacked DRAM.

The prototype | Photo by PCWatch

Ambition. Almost as important as the presentation of the ZAM prototype is the alliance itself. Intel has been away from the memory market for many years. It tried in the 1980s and again, years later, with its Optane technology, which died without making the slightest dent in the market. SoftBank, for its part, represents a Japan that led this sector in the 1980s but was overshadowed by emerging South Korean companies. In fact, Intel's memory business was eaten by the Japanese... and the Japanese by the South Koreans. SAIMEMORY is backed not only by those two sharks, but also by other partners such as Fujitsu, Shinko Electric Industries, PowerChip Semiconductor Manufacturing and the University of Tokyo.
And if ZAM memory works at a commercial level, it will not only be good news for easing the strain on memory production chains (which might also relieve the consumer market, wrecked by the needs of data centers), but will also mark the birth of a new and ambitious player seeking to break the hegemony of the trident that currently leads the sector. We will see it, of course, in a few years, since SAIMEMORY plans to have complete prototypes in fiscal year 2027 and to begin commercialization in 2029.

Image | Samsung, Maxence Pira