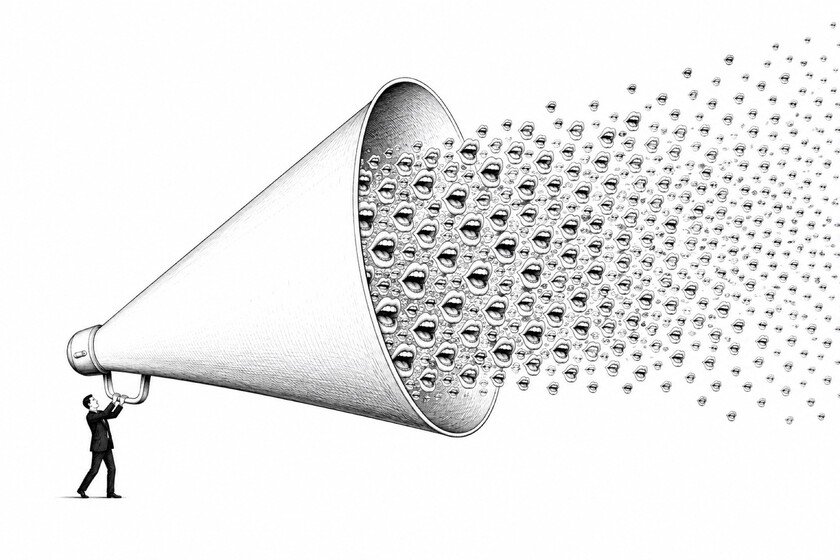

AI promised to decentralize knowledge. It’s doing exactly the opposite.

The entire AI debate revolves around the same things: employment, deepfakes, copyright, automation. These are reasonable questions. But there is one that matters at a higher level: who controls what AI considers to be the truth? Because AI, which looked like the great decentralizer, is actually the most centralizing technology since the printing press.

The backdrop. When the printing press arrived, Protestant reformers saw it as the end of the papal monopoly on knowledge: if anyone could read the Bible, the pope would lose his authority. And they were partly right. But the printing press also standardized English as the dominant language, wiped out regional dialects and, in the process, made the modern state possible: without cheap, reproducible text there are no uniform laws and no large-scale tax collection. What looked like a liberation was also a centralization. It just took us two centuries to notice.

Between the lines. With AI the process is moving much faster. When Google shows a Gemini response in AI Overviews, half of users no longer click on anything and 26% close the search outright. Searches without clicks have gone from 54% to 72%. The open web, with all its diversity and chaos, is losing users to a single synthesized answer, as journalist Jerusalem Demsas has analyzed in The Argument.

And that answer is not neutral. LLMs are trained mainly on large Anglophone newspapers, Wikipedia and academic texts. Local or minority sources barely exist in the corpus. And during fine-tuning the models are calibrated to align with expert consensus and avoid awkward positions. The result is not a mirror of human diversity but a snapshot of what sits at its center.

Yes, but. It can be argued that users can ask the AI to defend any position, so diversity exists in how the tool is used even if not in how it is built. The argument has some merit. But the printing press also produced very varied content; what was centralized was who set the standards.
Here, for Western chatbots, the standards are set by a corpus made in Silicon Valley. The case of Grok is quite illustrative. When Musk tried to move the model away from the progressive consensus, the system began generating anti-Semitic content within days. He had to backtrack. The values of an LLM do not live in a superficial layer that can be retouched; they are embedded in the corpus from the start.

The big question. ChatGPT is approaching one billion weekly users. Elite models are developed by a handful of companies: mainly OpenAI, Anthropic and Google. We can add xAI. The next tier comes from China: DeepSeek, Moonshot, Alibaba… The researchers behind these elite models have, to a large extent, studied in the same places, worked in the same offices and share, broadly speaking, the same cultural references.

The risk of this centralization is not that AI lies more than Google. The risk is that when AI makes mistakes, it errs toward the center, not toward conspiracism. The risk is that this center is being set, without anyone having decided it, by a few people in San Francisco.

Featured image | Xataka