We knew that generative artificial intelligence was a monster forcing companies to make large investments in energy, but Google’s first detailed analysis has finally put figures on the table.

Straight to the point. According to Google’s technical report, based on data from May 2025, an average text query to Gemini consumes 0.24 watt-hours (Wh) of electricity. To put that in context, it is roughly equivalent to watching nine seconds of television on a conventional 100 W set.

Water consumption, which is still needed to cool the servers, is 0.26 milliliters per query, the equivalent of about five drops of water. The carbon footprint of the entire inference process, according to the report, is 0.03 grams of CO2 equivalent.
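The everyday comparisons above can be checked with a few lines of arithmetic. The sketch below is illustrative only: the per-query figures are the ones quoted from the report, while the 100 W television and the 0.05 ml drop size are assumptions chosen for the comparison, and the helper names are hypothetical.

```python
# Per-query figures quoted in the article.
ENERGY_WH = 0.24   # watt-hours per text query
WATER_ML = 0.26    # milliliters of water per text query

# Assumptions used only for the comparison (not from the report).
TV_POWER_W = 100        # a conventional television
DROP_VOLUME_ML = 0.05   # a commonly used approximation for one drop


def seconds_of_tv(energy_wh: float, tv_power_w: float) -> float:
    """Seconds a device drawing tv_power_w can run on energy_wh."""
    # 1 Wh = 3600 watt-seconds, so divide the energy by the power draw.
    return energy_wh * 3600 / tv_power_w


def drops_of_water(volume_ml: float, drop_ml: float) -> float:
    """Number of drops of size drop_ml contained in volume_ml."""
    return volume_ml / drop_ml


tv_seconds = seconds_of_tv(ENERGY_WH, TV_POWER_W)
water_drops = drops_of_water(WATER_ML, DROP_VOLUME_ML)
print(f"{tv_seconds:.1f} s of TV, {water_drops:.1f} drops")
# prints: 8.6 s of TV, 5.2 drops
```

Under these assumptions the result rounds to the nine seconds and five drops cited in the text.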

Wrong estimates. Just a year ago, third-party analyses estimated that a single AI query in the Google search engine, such as those behind AI Overviews, could consume about 3 Wh, ten times more than a traditional search. This led to calculations as striking as the claim that deploying AI in the search engine would consume enough energy to charge seven electric cars per second.

With Google’s official data in hand, we see that this estimate was off by a factor of 12.5. New software techniques (such as speculative decoding) and more efficient model architectures (such as the Mixture-of-Experts paradigm) have completely changed the picture.
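The factor of 12.5 is simply the ratio between the two figures cited so far. A minimal sketch, with hypothetical variable names, and keeping in mind that the earlier estimate referred to AI queries in the search engine while the new figure refers to a Gemini text query:

```python
# Both values are taken from the article; the names are illustrative.
EARLIER_ESTIMATE_WH = 3.0   # third-party estimate per AI search query
REPORTED_WH = 0.24          # Google's reported figure per Gemini text query

# How many times larger the earlier estimate is than the reported figure.
overestimate_factor = EARLIER_ESTIMATE_WH / REPORTED_WH
print(round(overestimate_factor, 1))
```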

Inference, not training. These figures, the most concrete the company has published to date, only account for Gemini’s consumption during inference, that is, when generating responses for users. The costly process of training the large language models that power these tools remains a mystery, but Google justifies this by saying that the massive adoption of generative AI, now integrated even into its search engine, has shifted the focus to inference.

It is also in this direct relationship with the user that large technology companies are achieving the biggest efficiency gains. Google says that, over the last 12 months, the energy consumption of each Gemini query has fallen by a factor of 33 and its carbon footprint by a factor of 44. Much of this leap comes not only from more efficient models, but also from improvements in AI accelerators (TPUs and GPUs), including the TPUs that Google develops in-house.

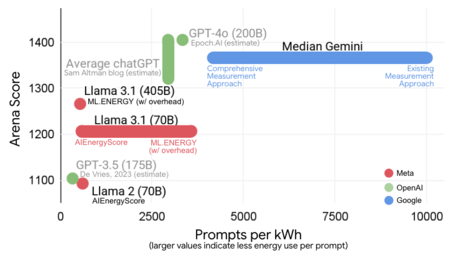

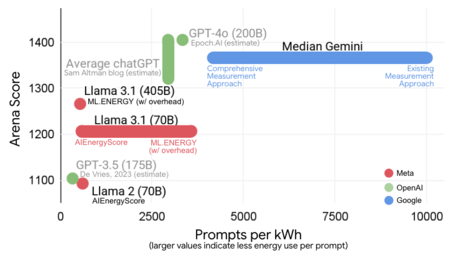

The number of prompts per kWh processed by different AI models

Less than Netflix. Google is not alone in this new era of transparency. Sam Altman, CEO of OpenAI, has also shed some light on ChatGPT’s consumption. In a June 2025 post, Altman said that an average ChatGPT query consumes approximately 0.34 Wh of energy and about 0.3 ml of water.

The energy figure is slightly higher than Gemini’s, although the comparison is a difficult one. Altman gave no details of his methodology, so we do not know whether his calculation includes all the factors that Google has considered (such as the electricity used for cooling and by idle machines, that is, machines that are inactive but kept ready for sudden spikes in demand).
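For a rough side-by-side view, the two quoted per-query figures can be converted into the prompts-per-kWh metric used in the chart caption. This is only a sketch with hypothetical names: it uses the two values cited in the text and, given the methodological caveat above, the results should not be read as a like-for-like comparison.

```python
# Per-query energy figures quoted in the article, in watt-hours.
ENERGY_PER_QUERY_WH = {
    "Gemini": 0.24,    # Google's technical report
    "ChatGPT": 0.34,   # Sam Altman's June 2025 post
}

WH_PER_KWH = 1000

# Prompts that could be served with 1 kWh at the quoted per-query energy.
prompts_per_kwh = {
    name: WH_PER_KWH / wh for name, wh in ENERGY_PER_QUERY_WH.items()
}

for name, prompts in prompts_per_kwh.items():
    print(f"{name}: about {prompts:.0f} prompts per kWh")

# Ratio between the two quoted figures (methodologies may differ).
ratio = ENERGY_PER_QUERY_WH["ChatGPT"] / ENERGY_PER_QUERY_WH["Gemini"]
print(f"ChatGPT / Gemini energy ratio: {ratio:.2f}")
```

With these inputs, Gemini comes out at roughly 4,200 prompts per kWh and ChatGPT at roughly 2,900.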

Both companies have drawn comparisons with television: “An hour of Netflix consumes 100 times more electricity than ChatGPT,” says an official OpenAI slide. The same slide says that the total impact of AI on US carbon emissions would be around 0.5%.

Images | Google
