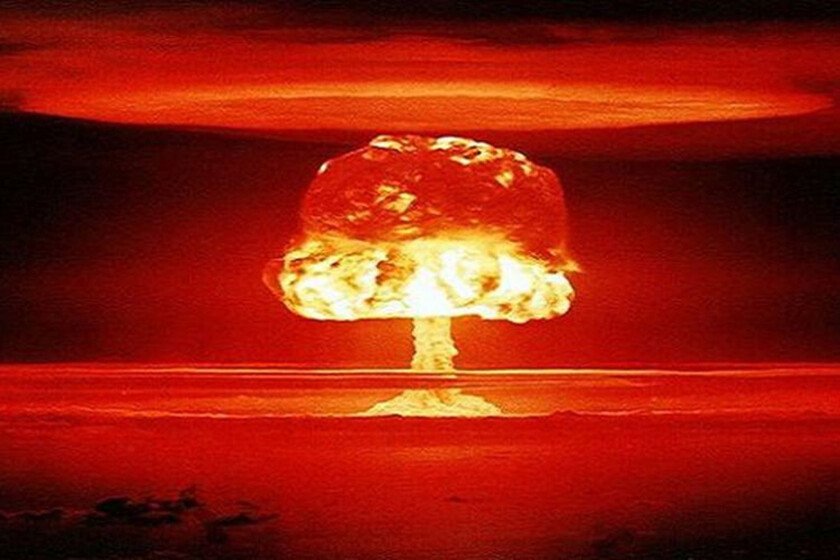

Anthropic has refused to bow to pressure from the Pentagon. Its co-founder and CEO, Dario Amodei, has just published a statement making it clear that the company is not willing to break its ethical principles: no mass surveillance with AI, and no development of lethal autonomous weapons with its models. And that reminds us of a terrible precedent: the atomic bomb.

From hero to villain. J. Robert Oppenheimer went from being the “father of the atomic bomb” and a national hero to becoming an outcast. His sin was not betrayal, but moral clarity. After witnessing the horror of Hiroshima and Nagasaki, Oppenheimer desperately tried to stop the atomic escalation and the development of the hydrogen bomb.

Either you are with us, or against us. The United States, which had praised him in the past, took advantage of his former political affiliations and stripped him of all his privileges and influence. This demonstrated how the US government simply decided that scientific knowledge was state property and that any researcher who tried to propose ethical limits to their own projects would be treated as an enemy of the country.

History is threatening to repeat itself these days.

From Oppenheimer to Anthropic. It is doing so with one protagonist that remains the same, the US Government, and another that has changed: the one who now defends the ethics of a scientific and technological project is not Oppenheimer, but Dario Amodei, CEO of Anthropic.

Claude is increasingly vital in the US Government. His company is between a rock and a hard place these days. Anthropic managed to make its model Claude the darling of the US Government. This AI’s capabilities have proven so remarkable that it was apparently used to plan the arrest of the former president of Venezuela, Nicolás Maduro.

Red lines. But before the Pentagon could use Claude, Anthropic imposed certain red lines: no use for mass surveillance of US citizens, and no use for the development of lethal autonomous weapons. The Pentagon has ended up disliking those red lines, so it wants to eliminate them and use Claude as it pleases as long as, it says, the Constitution and American laws are respected.

The Pentagon wants AI without restrictions. That has ended up causing an enormously tense situation these days. The Pentagon threatened to punish Anthropic if it did not give in to its demands, and those threats have not been subtle at all. In fact, the Department of Defense has suggested that it could label Anthropic “a supply chain risk,” a designation typically reserved for companies in rival countries like China or Russia.

Contradiction. Dario Amodei himself explained in a post on the company’s official blog that two of the Pentagon’s threats were mutually exclusive: “These last two threats are inherently contradictory: one labels us as a security risk; the other labels Claude as essential to national security.”

Can AI be nationalized? It’s a disturbing irony: the same government that considers Claude an essential tool for national security is willing to label its creators a public threat if they don’t hand over the keys to the kingdom and their AI. What the Department of Defense basically wants is to “nationalize” the AI technology developed by Anthropic and appropriate it, as it already did with the technology that gave rise to the atomic bomb. We know how that ended.

Anthropic refuses to give in. The danger is enormous on both fronts: mass surveillance, rather than defending democracy, can undermine it from within, and the NSA scandal is a good example. But even more worrying is the Pentagon’s intention to use this AI to develop lethal autonomous weapons. Amodei insisted on this point, indicating that foundational AI models are “just not reliable enough to power fully autonomous weapons,” and adding: “We will not knowingly provide a product that puts American warfighters and civilians at risk.”

Amodei even offers the Department of War/Defense help in the “transition to another provider” of AI models, but at the moment it is not clear which path the US government will take.

Oppenheimer Moment. If the Pentagon finally carries out its threat and bans Anthropic, the message for the industry will be chilling. In the age of AI there are no conscientious objectors: if a company develops a technological and strategic advantage at a military level, that company is at the mercy of the State. It is a new and terrifying “Oppenheimer Moment” that shapes the future not only of Anthropic, but of the development of AI models itself.

