A week ago we were saying "the king is dead, long live the king": Anthropic's fall into outright ostracism after being deemed a "risk to the supply chain" of the United States practically coincided with the announcement, in record time, of the US Department of Defense's agreement with OpenAI. Behind the scenes: the reasons for the refusal from the company led by Dario Amodei and the unknown terms of the agreement that puts ChatGPT on the Pentagon's computers. A few days later, Caitlin Kalinowski is leaving her position at OpenAI, citing the military use of artificial intelligence as the reason.

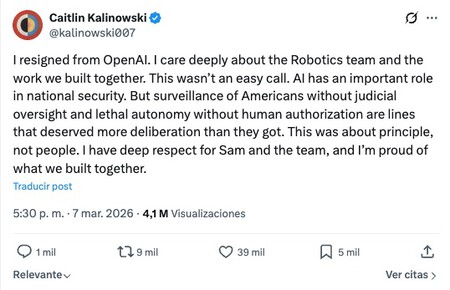

The resignation. Caitlin Kalinowski, head of the OpenAI robotics team since November 2024, announced her departure from the company a few hours ago in posts on X and LinkedIn. She makes it clear that her decision is about principles, not people, and expresses respect for Sam Altman and the team. Her brief statement names two red lines that, in her opinion, the company did not deliberate on enough internally:

- The surveillance of American citizens without judicial supervision.

- Autonomous weapons capable of firing without human supervision.

Context. The resignation comes amid Anthropic's departure from the Pentagon (the transition will last six months), OpenAI's arrival, and a debate about how far AI companies should go in collaborating with the US military establishment:

- Anthropic stood up to the Pentagon, drawing strict lines on domestic surveillance and autonomous weapons.

- OpenAI reached an agreement with the Department of Defense to deploy its models on a classified government network, a move that has been interpreted as opportunistic. According to the company led by Altman, the agreement excludes domestic surveillance and autonomous weapons, but the damage to its reputation had already been done: thousands of people uninstalled ChatGPT to "cancel" the company.

Why it is important. Caitlin Kalinowski's departure is the first public, on-the-record resignation from a senior position at OpenAI explicitly motivated by ethical disagreements over the military use of AI. It sets a precedent in the industry because it exposes an internal fracture at the most influential company in the sector, leaving OpenAI in a delicate position with the people who use its tools, with its staff and with society.

Finally, it makes clearer than ever the need to legislate on artificial intelligence and its civil and military uses. Europe may be behind in the AI battle, but it set about the arduous task of establishing a regulatory framework a long time ago.

What Kalinowski does not say. In the comments of her post on

Kalinowski does not say it clearly, but once an agreement of this magnitude has been signed and the CEO has made it public, there is little room to maneuver from within: resigning with a public statement like hers is one of the few forms of pressure left to exert.

Consequences. For OpenAI, the pressure is growing, and it faces more departures and more cancellations if it does not show clearly, credibly and verifiably what its red lines are: the militarization of AI is something we are experiencing in real time. For the AI industry, it adds fuel to the fire of the self-regulation debate. And Anthropic gains in reputation, although in the short term it has lost an important agreement and its new status may jeopardize its survival.

In Xataka | The US has decided to shoot itself in the foot and destroy one of the best AI companies in the country

Cover | Caitlin Kalinowski
