ChatGPT Images: what it is and how to use it to create artificial intelligence images from your photos

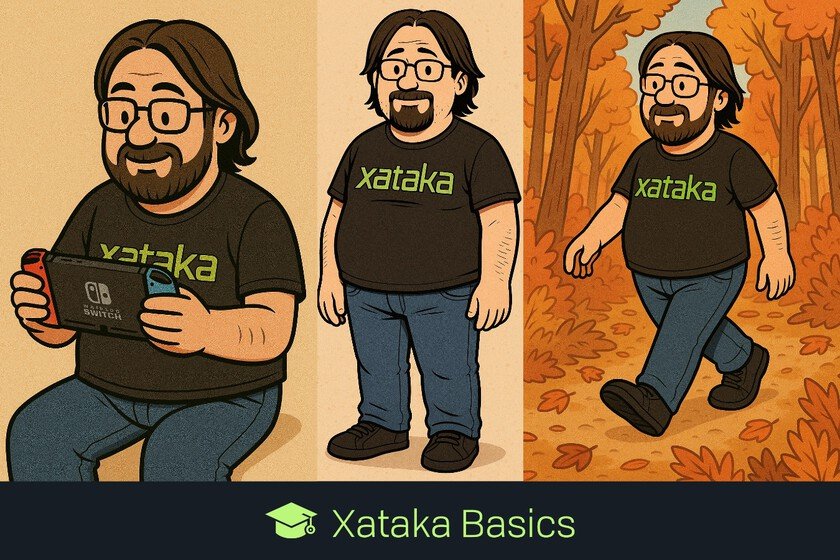

Let's explain what ChatGPT Images is and how you can use it. It is the new section of ChatGPT designed to help you create and edit photos and images with artificial intelligence. It is a forceful response to Gemini's free Nano Banana, a tool so impressive that it already represented a new evolutionary leap in AI-generated images. Since Nano Banana launched on Gemini, Google had been able to compete head to head with ChatGPT in creating images from photographs. Gemini could use your face and keep it recognizable, something OpenAI's AI could not do… until now.

We'll start by explaining what this new feature is and what sets it apart from the rest, because it includes some very interesting and innovative ideas. Then, at the end, we'll summarize how you can use it to create images in different styles from your photos.

What is ChatGPT Images

ChatGPT Images is a new section dedicated to image creation within ChatGPT. The chatbot has updated and improved its photo-based image creator so much that it now has a section of its own. While ChatGPT's regular chat lets you create images either from scratch or from your photographs, this section is dedicated exclusively to creating images from photos.

The idea is that, when you want to do this, instead of working out how to ask ChatGPT, you can open the section and speed up the process. That's because the Images section offers several design ideas and tools to edit your photos. So it's as easy as clicking one of the designs, choosing a photo, and letting ChatGPT do the rest. This removes the need to know how to write a good prompt, and it makes the process simpler and more visual for inexperienced users. When you choose a design and upload the photo, it is automatically sent to ChatGPT along with a pre-generated prompt that you can see.
Showing you the prompt changes everything

Being able to see the prompt ChatGPT uses for each preset is important, because you can copy and paste it to modify it, or even use it in Gemini or another competing tool. So ChatGPT Images is not only a good testing ground: by offering you several prompts, it also gives you a foundation for later generating a much more personalized image. You'll also see more transparently how image-editing prompts work, and you'll be able to combine parts of different prompts to create a completely unique one.

Until now, when you wanted to create an image from a photo, you had to start from scratch, writing the prompt yourself or searching the Internet for one. That's why showing it to you changes everything: it lets anyone, even without prior knowledge, create very polished images with AI. On top of all this, the interface itself simplifies everything, since each idea is shown with a sample of the result. If you see something you like, you just click it and choose your photo.

How to use ChatGPT Images

First, open the ChatGPT website or app on your device. In the side menu, click on the Images section, which appears just below the search options. This takes you to the main screen of the Images section. At the top there is a field where you can write a prompt manually, and below it you'll find pre-generated image styles along with ideas for other things you can do.

When you choose one of the designs or ideas, you'll go to a screen where you simply pick the photo you want to use. You can choose one of the photos you've used most recently, or click Choose a new photo to upload another one manually.

And that's it. Once you do, a chat with ChatGPT will open containing the photo and the prompt created to generate the type of image you chose. In a few minutes you'll have the result.
You can copy this prompt to reuse it with other images in the same chat, and even modify it to your liking.

In Xataka Basics | How to create a character in ChatGPT and Gemini to use it in all the images you make with artificial intelligence