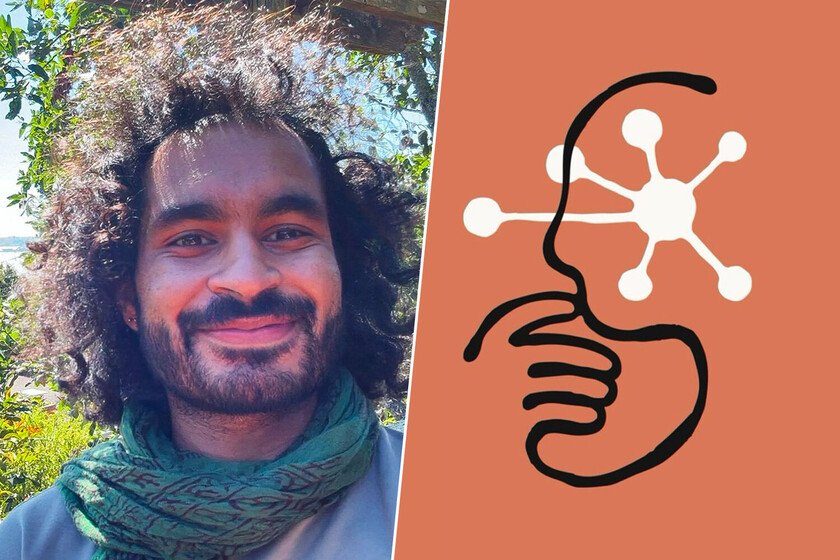

Anthropic’s head of AI security leaves the company to write poetry

In a move more typical of the “nihilistic penguin” than of the head of security at one of the main players in AI development, Mrinank Sharma, head of artificial intelligence security at Anthropic, has announced his resignation in a public letter on his X profile and says he will dedicate his life to writing poetry. In his statement, Sharma not only explained why he is leaving the company that develops the Claude models but also described the current state of AI development, in language that mixes alarm with personal reflection. “The world is in danger,” said the former Anthropic director.

The context: who he is and what he did at Anthropic. Mrinank Sharma headed Anthropic’s Safeguards Research Team, a research group focused on studying the risks associated with AI systems. Within Anthropic, Sharma’s work included developing defenses against risks such as AI-assisted bioterrorism and studying phenomena such as sycophancy (the tendency of AI models to flatter the user), as well as investigating how AI can influence human perception and change cultural behaviors.

He leaves, but leaves a message. The almost cryptic letter that Sharma published on X quickly went viral because of the messages it contained. In it, he expressed his concerns in a tone that goes beyond the technical. One of the quotes that has attracted the most attention: “The world is in danger. And not only because of AI, or biological weapons, but because of a series of interconnected crises that are developing at this very moment.” Beyond the almost apocalyptic wording, Sharma warned that humanity is approaching a critical point at which the development of AI poses ethical dilemmas for those who build it: “our wisdom must grow at the same rate as our ability to affect the world, otherwise we will face the consequences.”

Working to put yourself out of work. Sharma is not the only one who faces this ethical dilemma.
According to sources cited by The Telegraph, other Anthropic employees have expressed concern about the huge leap forward in the latest AI models. “I feel like I come to work every day to put myself out of work,” one of the employees acknowledged to the British newspaper. In a way this is true, since these employees are working on a technology that will, in all likelihood, change the nature of their work, and that of millions of people, within a few years.

Is that good or bad? A first reading of the letter leaves the feeling that these workers are developing the weapon that will destroy humanity. However, reading between the lines puts Anthropic in a pioneering position compared to its rivals at OpenAI, Microsoft or xAI: it is advancing at a pace that overwhelms even its own developers. That feeling does not seem to arise among the staff of other companies. Could it be that their models are not yet at that point of evolution? “Throughout my time here, I have seen repeatedly how difficult it is to allow our values to guide our actions. We constantly face pressure to let go of what matters most,” Sharma wrote.

The poetic turn. In addition to reflecting on the global risks he perceives, Sharma announced that his next professional step will be very different from what he has done until now. In his letter he mentioned his intention to devote time to what he called “the practice of courageous speech” through poetry. This switch from AI to poetry has been interpreted as a sign of dissatisfaction with the pace and focus prevailing in the AI industry. Like Sharma, other key figures in Anthropic’s AI development have announced their resignation in recent weeks. Harsh Mehta and Behnam Neyshabur also announced a few days ago that they were leaving the company. In their cases, however, the departure announcement was followed immediately by the announcement of a new AI project.
That is to say, far from the ethical concerns that Sharma raised, their intention was more along the lines of digging their own gold mine rather than someone else’s.

Image | Mrinank Sharma, Anthropic