Claude Mythos Preview is already here, and it’s so good it’s scary. Literally. Anthropic has just unveiled it, but so cautiously that we won’t even be able to try it: it will only be available to certain technology partners. That’s frustrating and unsettling at the same time, but also reasonable.

So powerful it’s scary. On February 24, 2026, Anthropic engineers tested their new artificial intelligence model, which they called Claude Mythos Preview, for the first time. They immediately realized one thing:

“It demonstrated a dramatic leap in its cyber capabilities over previous models, including the ability to autonomously discover and exploit zero-day vulnerabilities in the major operating systems and web browsers on the market.”

Threat to global cybersecurity. This finding made it clear to Anthropic’s leadership that although this capability makes the model very valuable for defensive purposes, it also poses obvious risks if the model were released to everyone. A cybercriminal, for example, could use it to find vulnerabilities in all kinds of systems and exploit them. A few hours ago the company laid out this analysis of Mythos as a cybersecurity threat in a post on its blog, highlighting, for example, how Mythos found a vulnerability (now fixed) that had been present for 27 years in OpenBSD, an operating system known precisely for its very strong security. There were more examples, and all of them pointed to the same conclusion:

Mythos is too powerful for ordinary mortals to use.

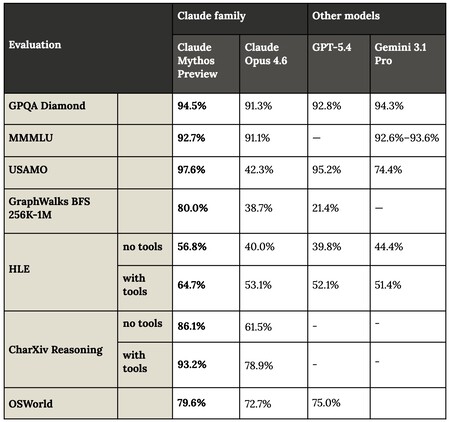

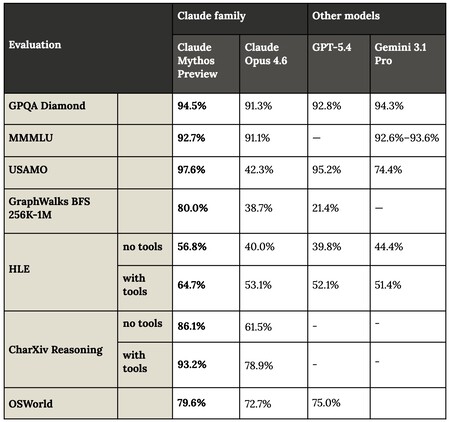

Superior in every benchmark, and in some, such as USAMO (mathematics), the jump is simply incredible. Source: Anthropic.

The best ever, according to the benchmarks. Anthropic has published a very detailed report on this model, its “system card”. Among the data it includes is the model’s benchmark performance, where it sweeps GPT 5.4, Gemini 3.1 Pro and Claude Opus 4.6, which until now was the best model in the world in almost every performance test. Although in some cases the jump is not spectacular, in others such as USAMO (mathematical problem solving) Mythos comes close to perfection.

It barely hallucinates… The system card also describes in detail how Claude Mythos Preview has a drastically lower hallucination rate than Claude Opus 4.6 and earlier models. It is also able to say “I don’t know” when it lacks the information to answer, which reduces hallucinations caused by overconfidence.

…but when it does, be careful. The document warns of a new phenomenon: when the model fails at certain complex tasks, its “hallucinations” are not obvious errors but extremely subtle, well-argued technical failures. This is dangerous because the answer can look completely correct even to experts, so catching it requires very careful verification.

Project Glasswing. Because of that power, the model will only be available through a “defensive” program called Project Glasswing, which is exclusive to some of Anthropic’s technology partners: specifically AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA and Palo Alto Networks. All of them will have the privilege (and responsibility) of accessing Claude Mythos Preview to identify vulnerabilities and exploits and fix them before bad actors can take advantage of them.

Mythos Preview is “just the beginning”. Although this is the most capable model seen so far, at least according to the benchmarks and data Anthropic has presented, the company states that “we see no reason to think that Mythos Preview is the point at which the cybersecurity capabilities of language models reach their peak.” It expects models to keep improving over the coming months and years, although this new model is certainly on another level.

In Xataka | OpenAI and Anthropic have proposed the impossible: lose $85 billion in one year and survive
