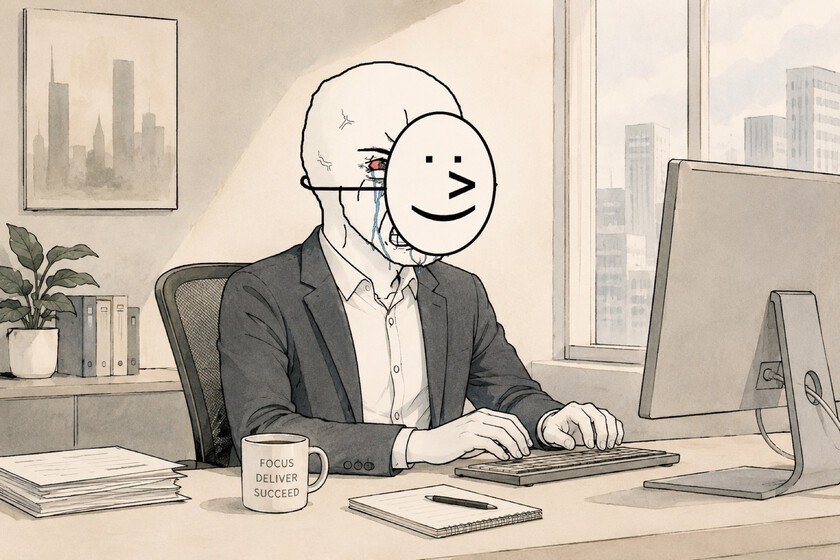

Companies have been monitoring their employees for a long time. First came clocking in. Then the logging of keystrokes and mouse activity. And now a new generation of emotion-monitoring software analyzes whether the expression on your face while you work is positive enough.

It is called “emotion AI” or affective computing, and it is no longer science fiction.

Why it matters. Time tracking and on-screen activity monitoring were the first battles between companies and workers in the digital era and in the remote-work period triggered by the pandemic. This is the next one, and it goes much deeper: it is no longer about measuring how much you work, but about quantifying how you feel while you do it.

The context. Tools like MorphCast, HireVue and a Slack integration called Aware have been refining this type of analysis for years. Some scan video conferences in real time to detect levels of attention, emotion or positivity. Others process chat transcripts to infer the collective mood of a team. Still others analyze the tone of voice of customer service agents, call by call. MetLife, Burger King and McDonald’s already use them or have at least tried them.

The global emotion AI market has reached 3 billion dollars, and forecasts suggest that it will triple before 2030.

Yes, but. The scientific premise on which this entire industry rests has a problem: it is contested. Many of these systems are built on psychologist Paul Ekman’s theory of basic emotions, which posits six universal emotions recognizable on any person’s face. The academic community has questioned this theory for some time.

Neuroscientist Lisa Feldman Barrett argues that facial gestures do not have an intrinsic emotional meaning, but rather a relational one. They depend on the context, the culture and the physiology of each person.

- Someone who frowns because they are concentrating may be labeled as angry.

- An employee who expresses sadness when talking to a patient may receive a penalty for lack of warmth.

LLMs and visual recognition systems replicate the biases of the data on which they are trained: a 2018 study found that an emotion recognition AI consistently rated Black NBA players as angrier than their white teammates, even when they smiled.

Between the lines. There is a clear market logic behind all this. Writer Cory Doctorow theorized it: the most extractive technologies reach the most vulnerable workers first, become normalized, and then move up. Truck drivers first, call center agents later… and office workers, now.

The EU banned emotion AI in the workplace in its AI Act, except for medical or safety purposes. That was the exception, not the rule. In the United States, legislation gives employers a very wide margin to monitor virtually anything a worker does on company time, property or devices.

And now what. The companies that sell these tools argue that humans are biased too, that a boss’s subjective impressions are just as fallible as an algorithm.

It’s a good argument, but something changes when emotional monitoring is automated: it is no longer a boss who senses that you are feeling low on a Monday. It is a system that analyzes 100% of your interactions and records everything.

Featured image | Xataka
