Let’s explain what the ChatGPT API is and how to get an API key. This key is the essential code you need to connect OpenAI’s GPT artificial intelligence models when developing an app or creating a workflow that includes them, because a third-party project cannot use GPT without it.

We are going to start this article by explaining what the ChatGPT API is and what it is for, in a way that anyone can understand. Then we will tell you step by step how to get your OpenAI API key to use GPT models in your projects.

What is the ChatGPT API and what is it for?

ChatGPT is the name of OpenAI’s artificial intelligence chat. Essentially, it is an intermediary for interacting with the company’s artificial intelligence model, which is called GPT. With each new version of GPT, ChatGPT gains new features and power.

We can say that GPT is the engine, which means it can also be linked to your own applications and to third-party services. These apps or services can then knock on GPT’s door and return the results to you.

However, for a service to use GPT, a bridge is needed, an intermediary, and that is where an API, or application programming interface, comes into play. APIs are a kind of communication bridge between an app and an external service. In this case, the API serves to connect other applications with ChatGPT, or rather, with the artificial intelligence model that powers it.

To give an example, imagine that I want to create an artificial intelligence bot. This bot would need an AI model, an engine to process requests. But an artificial intelligence model can weigh gigabytes or terabytes, and I can’t afford to include it within the app. So I will have to connect the bot to an external AI hosted on its own servers.

The idea is that when you write something to my bot, the bot sends your message to the AI, and when the AI generates the response it travels back to the bot, which shows it to you. And since the bot and the AI are on different servers, possibly in different countries, I’m going to need a bridge. This bridge is the API.
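The round trip described above can be sketched in a few lines of Python. This is a minimal illustration, not an official client: it assumes OpenAI's Chat Completions endpoint and its usual JSON response shape, and the function names (`build_chat_request`, `extract_reply`, `ask`) are hypothetical helpers invented for this example. The model name is left as a parameter because the available models change over time.

```python
import json
import urllib.request

# Assumed endpoint: OpenAI's Chat Completions URL.
API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(api_key: str, user_message: str, model: str) -> urllib.request.Request:
    """Package the user's message as an HTTP request addressed to the remote model."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # The API key travels with every request to identify the caller.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_reply(response_body: bytes) -> str:
    """Pull the generated text out of the JSON the API sends back (assumed shape)."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]


def ask(api_key: str, user_message: str, model: str) -> str:
    """Bot side of the bridge: send the message, wait, and return the AI's answer."""
    request = build_chat_request(api_key, user_message, model)
    with urllib.request.urlopen(request) as response:
        return extract_reply(response.read())
```

The bot only builds a request and reads a reply; the model itself never leaves OpenAI's servers, which is exactly the role of the bridge.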

The GPT API is paid. You can create a key for free, but then you will need to purchase token packs, which work like credit packages for using the API. Each request spends tokens depending on the processing and the task involved, and when you run out you will have to buy more.

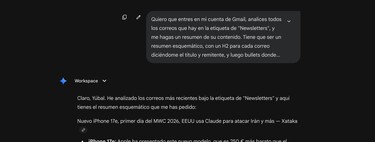

But in exchange, you get the possibility of using ChatGPT in your projects: to create your own chatbot or assistant, automate tasks, analyze text, video or audio, produce transcriptions and summaries, generate code, and ultimately whatever you need. The AI will not run natively inside your application, but the two will be connected.

Token types and API prices

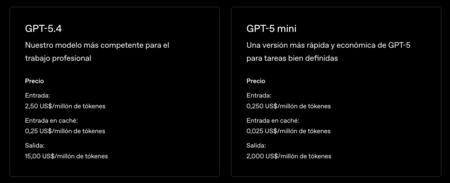

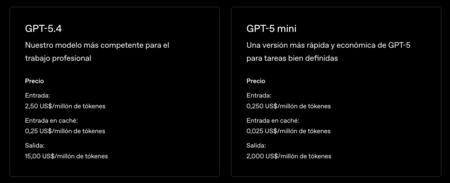

The price of the GPT API depends on the model you want to use. The most powerful model is more expensive, since it consumes more resources, while lighter models have lower prices. There are three types of tokens, so it is advisable to know them all.

Input tokens are the ones you send to the model: prompts, instructions, texts to analyze or context that you add. For example, if the prompt “Summarize this article on artificial intelligence in 5 lines” comes to 200 tokens, it costs 200 input tokens.

Output tokens are what the model generates as a response, that is, what the text or image it replies with consumes. If an answer has 500 tokens, that is how many you spend. These tokens are more expensive because the model has to generate new text, which takes more computation.

Cached input tokens apply when you repeat the same context in multiple calls. When you hold a conversation in the same chat and on the same topic, the system temporarily saves what you said before so that it does not have to process everything again from scratch.

As for the price, it depends on whether you use a full model or a lighter one. This table shows the prices of the current flagship models. You can also use older models through the API, which are cheaper.

| Model | GPT-5.4 | GPT-5 mini |
|---|---|---|
| Description | Our most competent model for professional work | A faster and cheaper version of GPT-5 for well-defined tasks |
| Input tokens | $2.50/million tokens | $0.250/million tokens |
| Output tokens | $15/million tokens | $2/million tokens |
| Cached input tokens | $0.25/million tokens | $0.025/million tokens |

When you generate an API key, you will be able to buy token packs of the three types, which will be spent as you use the API. When the tokens of one type run out, you will have to buy more.
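To get a feel for how the three token types add up, here is a small cost estimate in Python using the per-million prices quoted in the table. The function name `estimate_cost` and the dictionary layout are made up for this illustration, and the sketch assumes cached input tokens are counted separately from regular input tokens. Actual billing may differ, so treat the result as a rough guide.

```python
from decimal import Decimal

# Prices in US dollars per million tokens, copied from the table in this article.
PRICES_PER_MILLION = {
    "GPT-5.4": {
        "input": Decimal("2.50"),
        "output": Decimal("15"),
        "cached_input": Decimal("0.25"),
    },
    "GPT-5 mini": {
        "input": Decimal("0.250"),
        "output": Decimal("2"),
        "cached_input": Decimal("0.025"),
    },
}

MILLION = Decimal(1_000_000)


def estimate_cost(model: str, input_tokens: int, output_tokens: int,
                  cached_input_tokens: int = 0) -> Decimal:
    """Return the estimated cost in dollars of a single request.

    Assumption: cached input tokens are passed separately and are not
    also included in input_tokens.
    """
    prices = PRICES_PER_MILLION[model]
    total = (
        prices["input"] * input_tokens
        + prices["output"] * output_tokens
        + prices["cached_input"] * cached_input_tokens
    )
    return total / MILLION


# The 200-token prompt and 500-token answer used as examples earlier:
print(estimate_cost("GPT-5.4", 200, 500))
print(estimate_cost("GPT-5 mini", 200, 500))
```

With these figures, the example request costs less than one cent on either model, and most of that comes from the output tokens.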

How to get your GPT API

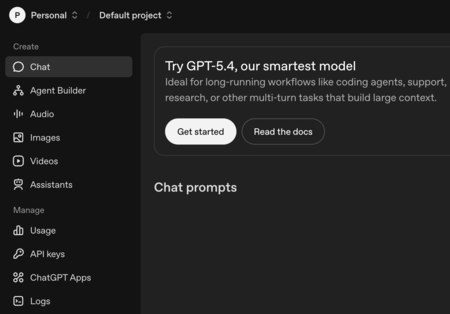

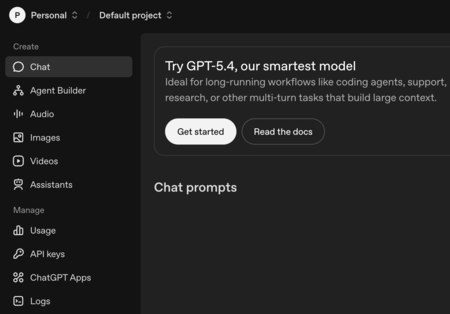

To obtain your GPT API key, go to platform.openai.com/chat and log in with your ChatGPT account. In the left column, click API keys within the Manage section.

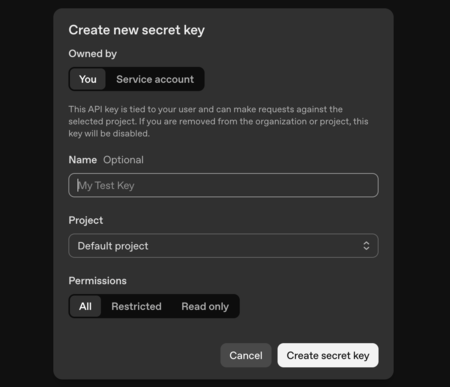

On this page, click Create new secret key. This takes you to a screen where you can configure your key: link it to a project, give it a name, or set restrictions if you want it to have any. When it is to your liking, click Create secret key.

And that’s it. With this you will have created an API key or, as the platform calls it, a Secret key. This is the key you will have to enter when you want to link GPT to a third-party service.
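If the service you are linking is your own code, a common practice is to keep the secret key out of the source and read it from an environment variable. The sketch below assumes the variable is called `OPENAI_API_KEY`, a widely used convention; the helper names `load_api_key` and `auth_headers` are hypothetical and exist only for this example.

```python
import os


def load_api_key(env=None) -> str:
    """Read the secret key from the environment instead of hardcoding it.

    `env` defaults to the real process environment; passing a dict makes
    the function easy to try out without touching your system settings.
    """
    env = os.environ if env is None else env
    key = env.get("OPENAI_API_KEY", "").strip()
    if not key:
        raise RuntimeError("OPENAI_API_KEY is not set; create a secret key first.")
    return key


def auth_headers(api_key: str) -> dict:
    """Build the HTTP header that carries the key with each API request."""
    return {"Authorization": f"Bearer {api_key}"}
```

Keeping the key in the environment means it does not end up in shared code or version control, where anyone who found it could spend your tokens.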

