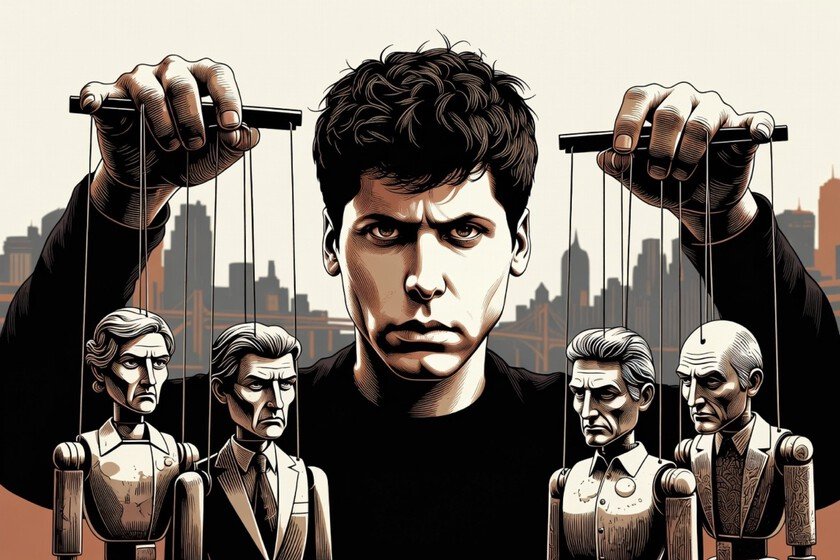

Sam Altman sat down over the weekend with his audience on X to answer questions about the agreement that OpenAI has just signed with the United States Department of War. What came out of that session was a fine, if unintentional, X-ray of the sector's biggest contradiction right now.

Why it matters. The CEO of OpenAI said he is terrified of “a world where AI companies act as if they have more power than the government.” The phrase sounds good: it is marketing-friendly and aims to present OpenAI as a powerful but highly responsible and honest company.

The problem is the context in which he says it: hours before OpenAI signed that agreement, the US government labeled Anthropic, its direct rival, a “supply chain risk” for refusing to sign under those same conditions. Altman came out to put out the fire just as he was being accused of starting it.

Between the lines. Altman’s argument rests on a premise that deserves scrutiny: that a democratically elected government must always prevail over unelected private companies. It is a philosophically reasonable position, but he applies it selectively.

Altman acknowledged that the deal “was rushed and the picture is not good,” and that OpenAI moved quickly to “de-escalate” tension between the Pentagon and the industry. In other words, his company made a unilateral strategic decision about how the entire AI industry should relate to the military establishment. That doesn’t exactly sound like institutional deference.

The contrast. Anthropic took a different path: it required explicit safeguards against the use of its AI for mass surveillance or autonomous weapons, and the government penalized it for doing so. OpenAI accepted a more ambiguous formula (“for all legal uses”) and won the contract. Several OpenAI employees signed a letter supporting Anthropic’s position.

That weekend, Claude became the most downloaded free app on Apple’s App Store, overtaking none other than ChatGPT. The market also has opinions.

Yes, but. It’s fair to admit that Altman’s position has some internal logic:

- If AI is going to be integrated into military systems anyway, it may be preferable for that to happen under negotiated conditions rather than under coercion.

- And he’s right about one thing: labeling Anthropic a supply chain risk, a designation intended for hostile foreign suppliers and here applied to an American AI safety company, is, in his own words, “an extremely frightening precedent.”

The big question. Who really decides how AI is used in military contexts? The companies that build it, the governments that contract it, or the engineers who design it and who are increasingly organizing to influence those decisions?

Altman says he believes in the democratic process. But OpenAI negotiated privately, signed privately, and made only a fraction of the contract public. Democratic transparency starts there.

In Xataka | Anthropic has become the Apple of our era and OpenAI our Microsoft: a story of love and hate

Featured image | Xataka
