A great Mecano song (I know, this is very Kiss FM) said that ‘the face you see is a Signal ad’. In case any of our painfully young readers don’t know, Signal is a brand of toothpaste. And if there is anyone whose face fits that description exactly, it is Sam Altman, CEO of OpenAI, who with a perfect, winning smile tries to convince the world that his company is just as perfect and convincing.

For many people, as of today, it is not.

What has happened. In recent days we have seen the US and its Department of Defense (or War, as they like to call it now) decide that any AI company that wants to work with them will have to let them use the AI as they see fit. Does that mean spying on people en masse? Then so be it; it has been done before. Does it mean telling the AI to develop lethal autonomous weapons? That too.

Anthropic stands firm. But lo and behold, precisely the company that was already working with the Pentagon said no way. Anthropic, which had been collaborating with the Government for months (Claude was used for the arrest of Nicolás Maduro), has made it clear that there are red lines it will not cross.

If Anthropic doesn’t want to, let OpenAI do it. The Pentagon has threatened to turn Anthropic into a pariah company, but so far it has made no official move. What has happened is that the US Government has decided to change its technological partner. OpenAI has replaced Anthropic and appears to have reached an agreement to work with US defense and security agencies.

Sam Altman seizes the opportunity. Sam Altman himself said as much in an announcement on Twitter (I still refuse to call it “X”), explaining that his company had agreed to deploy its models on the US War Department’s classified network. The curious thing is that this agreement establishes the same red lines that Anthropic had: no spying on American citizens and no autonomous weapons. The official announcement even stresses that the agreement “has more safeguards than any previous agreement for classified AI deployments, including Anthropic’s.” There is, for example, one additional requirement: that the models not be used for “social credit” systems that rate citizens based on the information collected about them.

But. Although both Sam Altman and the company’s blog appear to place limits on the War Department’s use of its AI, the terms of that agreement contradict Altman’s claims. The announcement mentions a specific paragraph of the agreement that explicitly states the following:

“The War Department may use the AI system for all lawful purposes, consistent with applicable law, operational requirements, and well-established security and oversight protocols. The AI system will not be used to independently direct autonomous weapons in any case where human control is required by law, regulation or Department policy, nor will it be used to make other high-risk decisions that require approval from a similarly competent human decision-maker.”

Mass surveillance of American citizens is legal in certain scenarios under the Patriot Act, which was passed after the 9/11 attacks, and that would allow the AI to process data and communications collected by mass surveillance systems. Jeremy Lewin, a State Department official, has indicated that this agreement “flows from the pillar of ‘all legitimate use’”, which suggests that the red lines Altman describes are not as clear-cut as they seem.

Internal protests. Last Friday at 5:01 p.m. was the deadline for Anthropic to accept the Pentagon’s terms, but it did not do so. That morning, a number of OpenAI and Google employees showed their support for the rival company’s ethical and moral stance, and almost 800 of them (681 from Google, 96 from OpenAI) signed an open letter entitled “We will not be divided.”

Altman says one thing, does another. In an interview with CNBC, Sam Altman said that despite all the differences he has with Anthropic, “I trust them as a company, and I think they really care about safety.” On Thursday, the CEO of OpenAI sent an internal statement expressing his desire for “things to de-escalate between Anthropic and the Department of Defense.” That message came to nothing less than two days later, when he announced the agreement with that same Department.

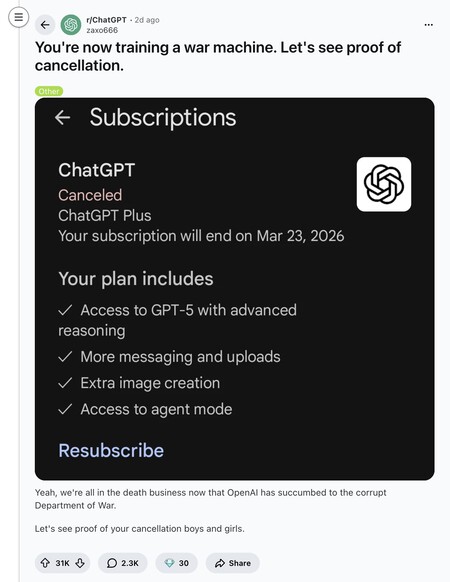

The world against OpenAI. Many people have taken to social networks to criticize the way OpenAI has acted. On Reddit, several posts appeared encouraging users to “Cancel ChatGPT”, gathering thousands of upvotes and thousands of comments, many of them indignant at the way OpenAI and Sam Altman have taken advantage of the situation. We have seen backlashes like this in the past (Facebook, Netflix), but what usually happens is that after the initial outrage, companies recover from the criticism and even come out stronger, for a simple reason: human beings have very short memories.
