Hubble made us believe that this exoplanet was impossible. James Webb just explained why we were wrong

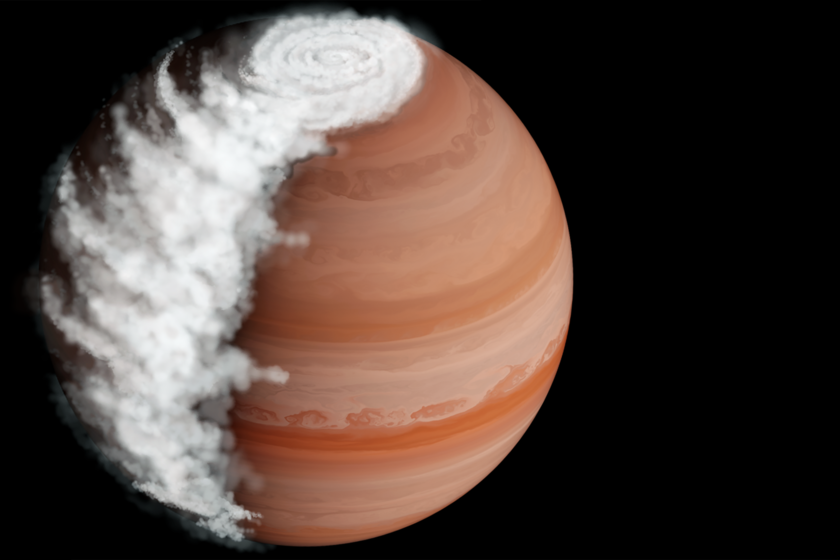

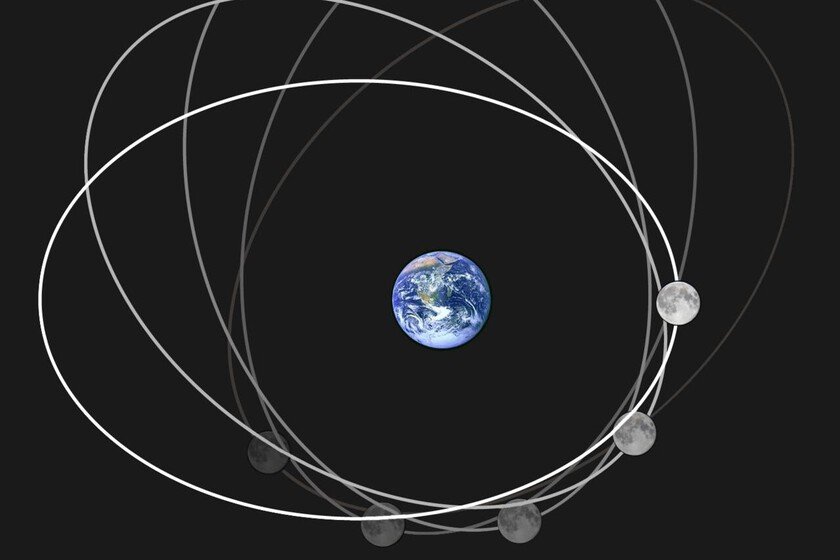

In 2014, the exoplanet WASP-94A b was discovered, a hot Jupiter with an anomalous amount of oxygen and carbon in its atmosphere. The first observations pointed to hundreds of times more of these two elements than in the atmosphere of the Solar System's Jupiter. This did not fit standard models of planetary formation. One possibility was that the models contained an error. However, according to what has just been verified with the James Webb Space Telescope, the problem was rather that the right telescope was not being used. Closer observation has shown that oxygen and carbon levels are actually much lower, consistent with known physics. As a bonus, something very curious has also been discovered: the planet has rocky clouds during the day that disappear when sunset arrives.

A very useful transit. The authors of a study recently published in Science took advantage of a transit of the planet in front of its star to study its atmosphere with the James Webb telescope. Previous observations had been made with the Hubble telescope. With it, the light spectra coming from the atmosphere could be analyzed and, from them, its composition could be established. However, since Hubble was not capable of distinguishing clouds from the rest of the atmosphere, the calculations were an average of the gases of everything together. As one of the authors of the study put it, with Hubble the result was something like looking through a foggy window. Now, after giving the window glass a good wipe, they have been able to see exactly the composition of both the atmosphere and the clouds.

Tidal lock. This exoplanet is tidally locked. This means that it takes the same time to orbit its star as it does to rotate on its own axis. The result is that it always has the same face pointing at the star, so on half the planet it is always day and on the other half it is always night. It is similar to what happens with the Moon, which always keeps one side hidden from us on Earth.
Despite having perpetual day and night on each face, on this type of planet you can distinguish between sunrise and sunset, depending on the flow of gases in the atmosphere. The limb at which cold gases from the night side pass to the day side is considered the planet's sunrise, while the limb at which the opposite occurs is its sunset.

Different compositions. When observing the planet in full transit, the day side could not be seen, since it was facing the star. The James Webb, however, has been able to capture the spectra of the two limbs that border the night side, considered sunrise and sunset. In this way, it has been able to verify two important pieces of information. On the one hand, as mentioned above, the levels of carbon and oxygen in the atmosphere are only five times higher than those of Jupiter. This is in line with other hot Jupiters and does not defy known physics. On the other hand, it has been seen that on the sunrise side there are clouds composed of silicates. That is, rocky clouds. However, these dissipate until they disappear on the sunset side. Thanks to this duality, it has been possible to explore the pure atmosphere, with hardly any clouds, in the area of the planet close to sunset.

Unknown causes. The authors of the study do not know what causes this strange behavior of the clouds. However, they have two hypotheses. The first would be something similar to the process that gives rise to fog on Earth. The clouds would form in the darkness of the night side, then enter the intense heat of more than 1,000 degrees on the day side. The substances that make up the clouds would boil and the clouds would vaporize over the course of the day, disappearing completely by dusk. Then, on the night side, the process would begin again. The other hypothesis suggests that there may be intense winds on the planet that drag the clouds down into its interior, taking them out of sight by sunset.

And now what? These scientists are already studying other hot Jupiters. So far, they have detected two others with the same distinctive cloud cycle: WASP-39 b and WASP-17 b. There is nothing like a good sample for properly studying any scientific phenomenon. The more planets that are found in the same circumstances, the better the causes can be clarified.

Image | Johns Hopkins