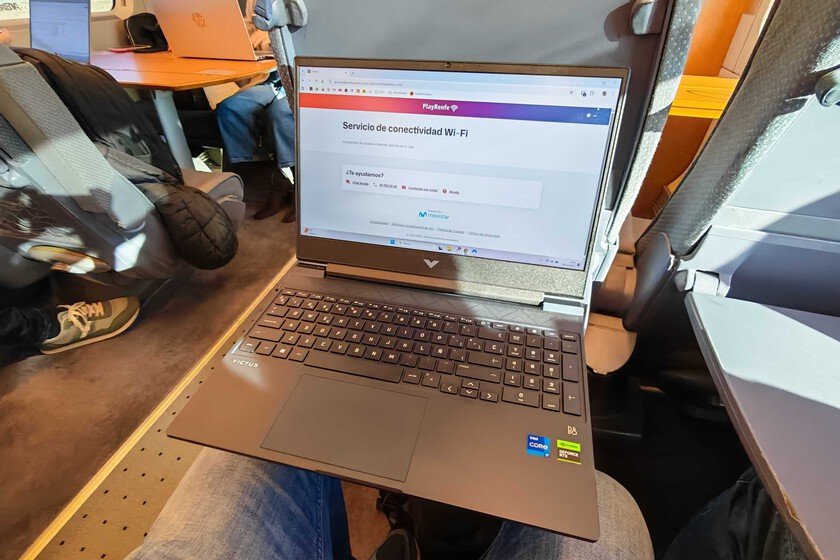

In case anyone is wondering, today is the day of the Xataka NordVPN Awards 2025. That means the editorial team travels from our respective cities to Madrid, mostly by train. We are very hard-working people, so we always use the trip to write an article, or at least we try to when Renfe's WiFi lets us. We are Amparo Babiloni and Jose García; join us on this sad journey.

I, Amparo Babiloni, am writing these lines on the Valencia-Madrid AVE on Thursday, November 20, connected to the PlayRenfe network. I like living dangerously. Simply connecting and being able to (half) work has been an ordeal. To give you an idea: I got on the train at 8:30 and wasn't able to start writing this until almost an hour later. Just logging into the CMS admin panel took me about ten minutes, and opening the draft at least five more. Slack simply doesn't work, either in the app or in the browser.

Jose here. I left Córdoba at 8:33, intending to use the trip to get some work done. The first stretch out of Córdoba was dreadful: the line runs through areas with many tunnels, then mountains, and then we enter a connectivity wasteland like Castilla-La Mancha. I don't know what's going on in Castilla-La Mancha, but that stretch is awful. Not only does the WiFi not work, but mobile coverage is terrible too. Great.

Connecting to the VPN is mission impossible. Besides having to confirm that I trust the network's certificates, it's impossible to use the WiFi with the VPN enabled. In fact, I'm writing this with the VPN disabled, which makes me somewhat uneasy on a public network. Oh yes, delighted to accept this.

During the first hour of the trip I relied entirely on mobile data to write an article and answer some important emails. Thank goodness I uploaded the images from home yesterday, because if I'd had to upload 30 six-megabyte JPEGs I might as well have started crying.
Slack was only half loading (I couldn't see my colleagues' profile photos), so Amparo and I are coordinating this article as best we can. Amparo is offline; I hope she's okay. It's 10:13. They've just announced over the public address system that there is an incident at the entrance to Madrid, so I'm stuck, completely stopped half an hour from Madrid 🤷♂️

## Dizzying speeds

Amparo again. During the first leg of the trip I suffered quite a few outages, but now the network seems to have more or less stabilized and I've been able to write all this in one go. But let's see what a speed test says.

The image weighs 13.9 KB. It took more than a minute to upload.

This is the download and upload speed while passing through Castilla-La Mancha.

One thing both Jose and I have noticed is that the network improves as we get closer to Madrid, probably because there are more antennas. This contrasts with what we experienced in 2016, when we first tried Renfe's WiFi. Back then we found "a very good connection speed, with peaks of 53 Mb/s for both upload and download, and with minimums of 9 Mb/s for download and 13 Mb/s for upload in an area with little coverage."

(My connection dropped here.)

It's back, but it took me a while to pick up writing again, because every new tab I open takes an average of 2-3 minutes to load, if it doesn't freeze altogether.

The speed entering Chamartín.

I repeated the test as we pulled into the station, and the download speed still doesn't even reach 2 Mbps. In fact, it's even worse than when I was farther from the city. I have to go now; we've just arrived. At least this time it's not because of a network outage.

Jose here again. It's 10:29 and I'm still stopped half an hour from Madrid. The driver is being very considerate about keeping us informed. The incident seems to be resolved, but now the approach into Madrid is congested.
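To put the screenshot upload in perspective, the arithmetic can be sketched as below. It is a rough estimate under stated assumptions: 1 KB = 1,000 bytes, 1 MB = 1,000,000 bytes, and an upload time of exactly 60 seconds (the text only says "more than a minute", so the real rate was lower and the extrapolated time longer). The helper names are illustrative, and a straight-line extrapolation from one small upload is crude, since a 13.9 KB transfer is dominated by latency and connection setup rather than raw link capacity.

```python
# Rough effective-throughput estimate for the 13.9 KB screenshot upload.
# Assumptions: 1 KB = 1,000 bytes, 1 MB = 1,000,000 bytes, and exactly 60 s
# for the upload (the article says "more than a minute", so this is an upper
# bound on the rate and a lower bound on the extrapolated time).


def effective_throughput_bps(size_bytes: float, seconds: float) -> float:
    """Average bits per second actually achieved end to end for a transfer."""
    return size_bytes * 8 / seconds


def transfer_time_s(size_bytes: float, throughput_bps: float) -> float:
    """Seconds needed to move size_bytes at a constant throughput."""
    return size_bytes * 8 / throughput_bps


if __name__ == "__main__":
    screenshot_bytes = 13.9 * 1_000  # the 13.9 KB speed-test image
    rate = effective_throughput_bps(screenshot_bytes, 60)
    print(f"Effective upload rate: {rate / 1_000:.2f} kbit/s")

    batch_bytes = 30 * 6 * 1_000_000  # 30 JPEGs of about 6 MB each
    days = transfer_time_s(batch_bytes, rate) / 86_400
    print(f"Time for the 30 JPEGs at that rate: {days:.1f} days")
```

Under these assumptions the screenshot moved at under 2 kbit/s, and naively pushing 30 six-megabyte JPEGs through the same pipe would take on the order of nine days.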
ADIF hasn't yet given an estimate of how long the stoppage will last, so until further notice, we're staying put. Right now, half an hour from Madrid, the network is stable, although the speed barely exceeds 1 Mbps. I tried to liven up the wait by watching a video about the new Bambu Lab 3D printer, but it wasn't a good idea. All videos load in 240p by default. If I increase the resolution, the video stops and gets stuck in an endless loading loop. I could turn to a PlayRenfe movie, but since November 1 they are no longer available.

The thing is, I have 5G on my phone (at 15 Mbps, so let's not get carried away either), so it definitely looks like a problem with the train's own WiFi network. My phone tells me it isn't a WiFi 6 network (which would help with congestion), but the underlying problem could be any number of things.

## A possible cause

One possible explanation is that two problems compound each other. First, you have a low-speed network that isn't prepared to support the huge number of devices on a train consuming bandwidth. We're writing this text, but there may be people watching TikTok or YouTube, or doing even more demanding things.

(It's 10:32; the train is moving again.)

Second, trains cannot escape the laws of physics. The Córdoba-Madrid AVE is currently moving at 248 km/h, and the Doppler effect does its thing. As the train moves, the signal received from the trackside antennas keeps changing, and the systems have to compensate for these variations. The faster …
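For a sense of scale, the Doppler effect mentioned above can be estimated with the standard approximation f_d = (v / c) · f_c, which holds when the speed v is tiny compared with the speed of light c. This is a minimal sketch under stated assumptions: the 2.6 GHz carrier is a hypothetical value (the article does not say which bands the train's connection uses), and it assumes the onboard WiFi reaches the internet over a mobile link to trackside antennas. Passengers' devices and the onboard access point travel together, so the shift applies to that train-to-mast link, not to the WiFi inside the carriage.

```python
# Maximum Doppler shift on the train-to-trackside radio link.
# Assumption: a 2.6 GHz carrier, chosen only for illustration; the article does
# not state which frequency bands the train's backhaul actually uses.

SPEED_OF_LIGHT_M_S = 299_792_458.0


def kmh_to_ms(speed_kmh: float) -> float:
    """Convert km/h to m/s."""
    return speed_kmh * 1_000 / 3_600


def max_doppler_shift_hz(speed_kmh: float, carrier_hz: float) -> float:
    """Largest frequency shift, reached when the train moves straight toward
    or away from the antenna: f_d = (v / c) * f_c, valid for v << c."""
    return kmh_to_ms(speed_kmh) / SPEED_OF_LIGHT_M_S * carrier_hz


if __name__ == "__main__":
    for speed in (0, 120, 248):  # 248 km/h is the speed quoted in the article
        shift = max_doppler_shift_hz(speed, 2.6e9)
        print(f"{speed:>3} km/h -> up to {shift:.0f} Hz")
```

At 248 km/h this gives a shift of roughly 600 Hz on the assumed carrier. As the train passes a mast, the shift swings from positive to negative, which is one of the variations the radio equipment has to track on top of changing signal strength and handovers between antennas.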