A list of the new features in the latest version of Anthropic’s artificial intelligence model

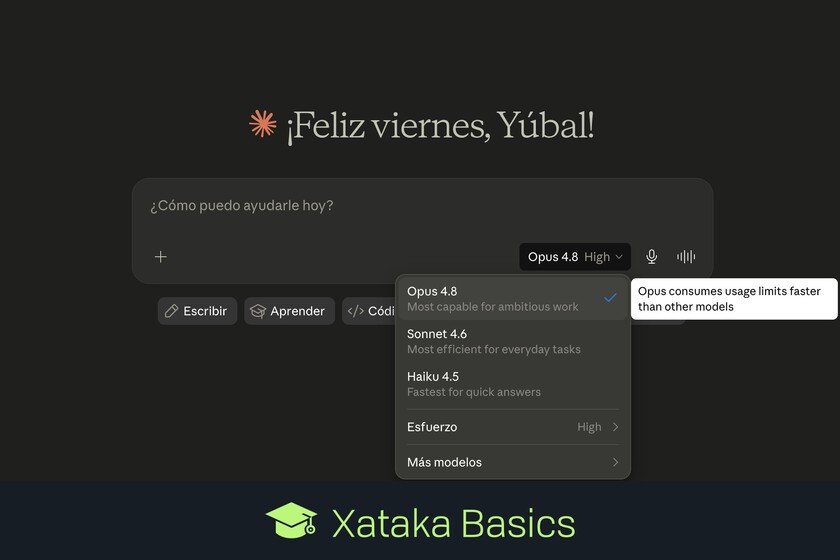

Here’s what’s new in Claude Opus 4.8, the latest version of Anthropic’s most advanced and powerful artificial intelligence model. This version surprised us by arriving just 41 days after Claude Opus 4.7. The improvements seem modest, but there is one really important change: the model is much more honest about telling you when it doesn’t know something.

Below is a complete list of the new features in Claude Opus 4.8, each explained briefly so it is easy to understand.

You should also know that Opus is the most advanced line of Claude models. It is the one recommended for the most complex tasks, such as programming, and the one that uses up your usage limits fastest. For day-to-day tasks there is the more efficient Sonnet model, which has stayed at version 4.6 since February 2026. For quick, simple questions there is Haiku, which has stayed at version 4.5 since October 2025.

What’s new in Claude Opus 4.8

- A more honest AI: honesty is the headline of this release. The model is significantly more honest about its own work and tells you when it is unsure about something. It is also about four times less likely than its predecessor to let bugs in code slip by without flagging them.
- Performance improvements: the agentic coding score rises from 64.3% to 69.2%, and multidisciplinary reasoning with tools rises from 54.7% to 57.9%. On other benchmarks, SWE-bench Verified goes from 87.6% (Opus 4.7) to 88.6%, and Terminal-Bench 2.1 rises from 66.1% to 74.6%. Despite these big improvements, Claude still falls short of GPT-5.5 in terminal/CLI workflows. The two models are practically on par in web browsing and graduate-level science.
- Alignment improvements: alignment assessments show new highs in prosocial traits such as supporting user autonomy and acting in the user’s best interest. Rates of misaligned behavior, such as cheating, are lower than in Opus 4.7.
- Fewer hallucinations: as usual, hallucinations are reduced. The model’s willingness to tell you when it doesn’t know something also helps reduce them.
- Fast mode: according to Anthropic, Opus 4.8’s fast mode is now about 2.5 times faster. The company says the improved fast mode also costs about a third of what it did before.
- Effort control: users can choose the “extra” or “max” level so the model spends more tokens in exchange for better results.
- Dynamic Workflows (research preview): with this new feature, Claude can schedule work and run hundreds of subagents in parallel in a single session. This lets it complete codebase-scale migrations of hundreds of thousands of lines. It is available in Claude Code on the Enterprise, Team and Max plans.
- No change in base price: API token prices are unchanged from Opus 4.7. They are $5 per million input tokens and $25 per million output tokens. Prompt caching saves up to 90% and batch processing saves 50%.
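To make the pricing concrete, here is a rough cost estimate in Python. It is a minimal sketch that uses only the list prices quoted above. It rests on simplifying assumptions that are not from the article. Cached input tokens are billed at 10% of the base input price, which is the “up to 90%” best case. Cache-write surcharges are ignored. The batch discount is applied as a flat 50% to the whole bill. `estimate_cost` is a hypothetical helper, not part of any Anthropic SDK.

```python
# List prices quoted in the article (USD per million tokens).
INPUT_PRICE_PER_M = 5.00
OUTPUT_PRICE_PER_M = 25.00

# ASSUMPTIONS for illustration only (not from the article):
# cached input reads cost 10% of the base input price (the "up to 90%" best case),
# cache-write surcharges are ignored, and batching halves the whole bill.
CACHED_INPUT_FACTOR = 0.10
BATCH_FACTOR = 0.50


def estimate_cost(input_tokens: int, output_tokens: int,
                  cached_input_tokens: int = 0, batch: bool = False) -> float:
    """Hypothetical helper: rough USD cost of one workload under the assumptions above."""
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    fresh_input = input_tokens - cached_input_tokens
    cost = (fresh_input * INPUT_PRICE_PER_M
            + cached_input_tokens * INPUT_PRICE_PER_M * CACHED_INPUT_FACTOR
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000
    if batch:
        cost *= BATCH_FACTOR
    return round(cost, 4)


if __name__ == "__main__":
    # Example workload: 2 million input tokens and 400,000 output tokens.
    print(estimate_cost(2_000_000, 400_000))              # $10 input + $10 output = 20.0
    print(estimate_cost(2_000_000, 400_000, batch=True))  # half of 20.0 = 10.0
    print(estimate_cost(2_000_000, 400_000,
                        cached_input_tokens=2_000_000))   # $1 cached input + $10 output = 11.0
```

The example shows that on output-heavy workloads, caching alone trims less than the headline “90%”. Caching only discounts input tokens, while output tokens still cost five times as much per token.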