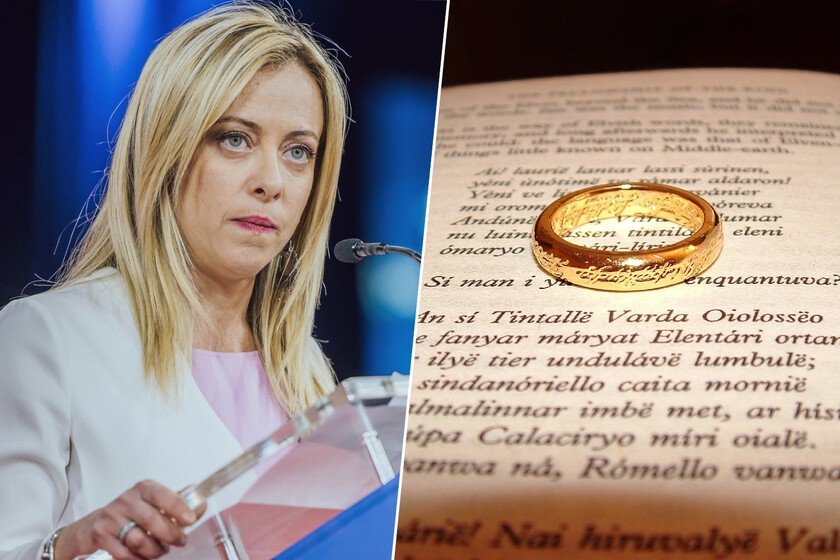

The Italian far right was looking for a way to clean up its image. It found the formula in ‘The Lord of the Rings’

In November 2023, Italy’s Prime Minister Giorgia Meloni opened an exhibition on JRR Tolkien at the National Gallery of Modern Art in Rome, organized by her Ministry of Culture. Nothing unusual, except that Meloni has long regarded ‘The Lord of the Rings’ as a “sacred text.” This is how the legendary British fantasy trilogy ended up becoming a political catechism for the Italian extreme right.

A bad start. The first Italian edition of ‘The Lord of the Rings’ was published in 1970, with a prologue signed by the philosopher Elémire Zolla, who interpreted the work as an allegory of “pure” communities threatened by foreign invaders. For the youth of the Italian Social Movement (the MSI, a party founded in 1946 by veterans of Mussolini’s fascism), that reading was a revelation. As far-right youth leader Marco Tarchi noted in his 1975 review of the book, the work was perfect for the young right precisely because it did not carry the weight of fascist history.

In search of a new identity. The MSI had been trying for years to reframe its identity in a country where the left dominated culturally and the old fascism was, understandably, stigmatized. It needed something new to renew its symbols. The universe imagined by Tolkien gave the party the opportunity to articulate a political identity around values of virtue and anti-modernity, values that Julius Evola, the ultra-nationalist mystical philosopher who advocated a racial hierarchy of pagan and aristocratic lineage, had already been preaching for decades.

Fascist Woodstock. In 1977, leaders of the MSI (and, above all, its youth faction) organized what would become known as the Hobbit Camps. The first was held over two days in southern Italy and brought together young people from all over the country. On the surface, the event looked like a folk music festival: stages with performances, tents, stalls selling books and T-shirts. That said, a dozen muscular young men kept order, identifiable by their bracelets bearing a Celtic cross.
By calling them Hobbit Camps, the organizers wanted attendees to identify with these small beings: conservative, rooted in tradition, wary of change and of foreigners… The group did not hide its affiliation: flags with Celtic crosses flew in perfect harmony with the Tolkienian aesthetic, and the band Compagnia dell’Anello (that is, “Fellowship of the Ring”) played songs about the good old European identity. The band’s anthem, in fact, was ‘Il domani appartiene a noi’ (“Tomorrow belongs to us”), whose title was a deliberate echo of the chilling Hitler Youth song in ‘Cabaret’, ‘Tomorrow Belongs to Me’. A women’s magazine called ‘Éowyn’ was also launched, in honor of the princess of Rohan. The camps stopped being held in 1981, once they had fulfilled their function as spaces of recruitment and indoctrination, hidden under a layer of celebration of popular culture.

Meloni the cosplayer. Meloni was four years old in 1981. But a decade later she attended the revival of these camps: Hobbit 93, held in Rome, where she sang with the band Compagnia dell’Anello. She had come to Tolkien at age 11 and joined the MSI youth wing shortly after. As youth activists, Meloni and her group of militants gave themselves Tolkienian nicknames, visited high schools in costume to win over students, and met at the “blowing of Boromir’s horn” to hold themed talks on political recruitment. In her autobiography ‘Io sono Giorgia’, published in 2021, Meloni described Sam as her favorite hobbit: neither strong, nor fast, nor majestic, an ordinary hobbit, yet one without whose help Frodo would never have completed his mission. A metaphor for the transformative power of ordinary people.

Sacred text. The admiration has not diminished over the years. Meloni has said that she considers ‘The Lord of the Rings’ not a fantasy, but a sacred text.
In an interview with ‘The New York Times’ in 2022 she declared that “Tolkien can explain better than we can what conservatives believe.” On the night of the general election she won, her sister Arianna posted a celebratory letter on Facebook full of Tolkien references. And at the final campaign rally, the actor Pino Insegno, the Italian voice of Aragorn, introduced her to the public by reciting the character’s speech in front of the Gates of Mordor. It is not the only fantasy reference that Meloni draws on: the political festival she founded, which attracts figures like Elon Musk and Viktor Orbán and has been described as the largest event of the European conservative current, is called Atreju, in honor of the hero of ‘The Neverending Story’.

Tolkien Expo. In November 2023, Meloni inaugurated the exhibition ‘Tolkien: man, teacher, author’ in Rome, organized by her Ministry of Culture to mark fifty years since the writer’s death. Criticism was abundant: several analysts pointed out the conflict of interest of a government with post-fascist roots dedicating public resources to praising the book that had served as a catechism for its predecessors.

Some nuances. Not all analysts see Tolkien as so central to the foundations of the new Italian extreme right, even though Meloni does show herself to be a strong devotee of the text. The political scientist Piero Ignazi pointed out, for example, that the Hobbit Camps were organized by a minority faction of the MSI, and that the focus on hobbits and elves is part of Meloni’s personal communication strategy: the image of a woman less aggressive than other far-right leaders, with accessible cultural references.

But is Tolkien a fascist? As for whether ‘The Lord of the Rings’ is right-wing, it is worth remembering that Tolkien wrote the trilogy during the rise of Nazism and fascism and refused to publish ‘The Hobbit’ in German when he was asked to prove Aryan descent.
Possibly he would have been repelled by the idea of hobbits being read as hostile to change and devoted to preserving tradition. Even so, his work continues to serve as a basis for dubious movements: as Arc Magazine analyzed in 2025, sectors of the technological right of Silicon Valley, aligned with the MAGA wing, …