The best fast, easy-to-use AI agents to do tasks for you without complications or long installations

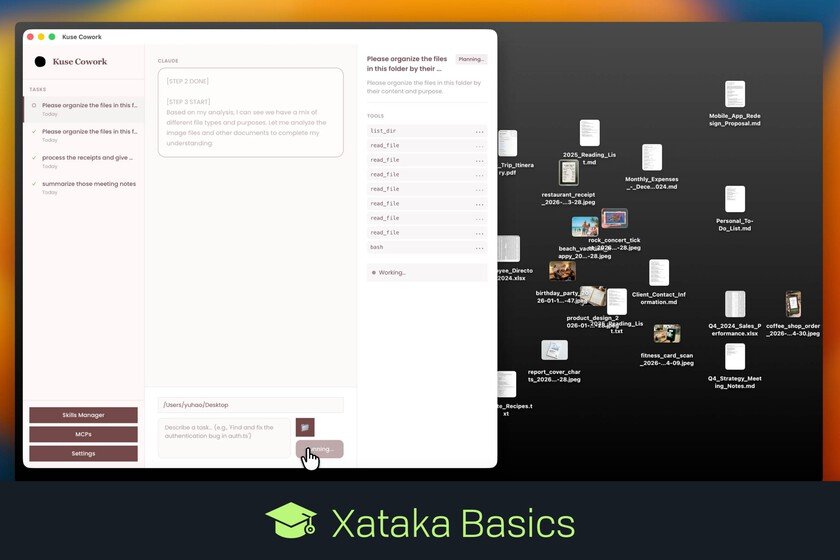

Here are the best fast, easy-to-use AI agents, with no complicated installation or configuration. Agents of this type are less complete and powerful than the most advanced ones, but they let you explore how artificial intelligence can do tasks for you.

We are going to keep the list short and stick to the best alternatives. Many are quite popular, others are less well known, and we even end with an open source alternative for privacy lovers.

Claude Cowork

Claude Cowork is possibly the best and simplest tool for testing the benefits of an agent, but in a controlled way. It is a paid feature that you can use within the Claude desktop application. The price to use it starts at 15 euros per month.

Claude Cowork allows Claude’s AI to manage files and use applications on your computer. You tell it what you want, and Claude will find the best way to do it. Also, if you install the Claude in Chrome extension in your browser, Cowork will be able to do things for you in the browser too.

Perplexity Comet

Comet is the AI browser from Perplexity, a platform that started as a search engine based on artificial intelligence and is now much more. It is now a chatbot that allows you to use various artificial intelligence models, such as Gemini, GPT or Claude.

The Comet browser has the peculiarity that it can use AI to do tasks for you, such as browsing for you, interacting with websites, automating tasks, searching and filtering information, managing workflows and other tasks such as comparing prices across multiple pages.

Manus on Telegram

Manus is an autonomous AI agent: you give it a high-level objective and it works on its own to achieve it. Tasks are asynchronous, so you can ask it to do something, turn off the computer, and receive a notification when the work is completed.
Manus can also be used in Telegram chats like a bot. With this, you will be able to use Manus directly from the messaging app without entering its official website or application. You can then open either of those to see the result of whatever you asked for, whether AI research, web development or design.

ChatGPT Agent

ChatGPT also has an agent mode in its application. With it, ChatGPT can interact directly with web pages and act on your behalf to book appointments, create presentations and perform other complex tasks. Of course, to use it you will need a paid subscription to the AI.

Genspark

This platform is a kind of all-in-one AI workspace. It is not exactly a chatbot, but it acts in a similar way to the concept of an agent: planning tasks, choosing the correct tools for them, and chaining the steps autonomously.

With this tool you will be able to create applications, documents, designs, images, music, spreadsheets and more. It has a free plan with limited access, although you will have to pay to access everything. It also has more than 80 tools and eight language models of different sizes, each suited to a different task.

AgentGPT

This was one of the first services to make AI agents accessible from the browser without having to install anything. It works in a similar way to the previous ones: you write what you want in natural language, the agent divides this into subtasks, and then executes them autonomously.

Kuse Cowork

Kuse is an open source alternative for using an agent capable of helping you perform tasks on your computer. It can generate documents and presentations, transform doc files and PDFs, create mind maps, interact with YouTube videos and more.

It is therefore an open alternative to Claude Cowork, where you can decide which AI models to use, either connecting them through their API or even installing them directly on your computer.
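
If you are curious about what "dividing a goal into subtasks, choosing tools and chaining the steps" looks like, here is a minimal Python sketch of that general idea. It is only an illustration and not how any of the services in this list actually work: the `plan`, `choose_tool` and `run_agent` functions and the two tools are hypothetical names invented for this example, and the planner simply splits the text on the word "then", where a real agent would ask a language model.

```python
from typing import Callable

# Hypothetical tools: each receives a subtask description and returns a text result.
def search_tool(task: str) -> str:
    return f"[search] results for: {task}"

def write_tool(task: str) -> str:
    return f"[write] draft for: {task}"

# Hypothetical tool registry, keyed by a word we look for in the subtask.
TOOLS: dict[str, Callable[[str], str]] = {
    "search": search_tool,
    "write": write_tool,
}

def plan(goal: str) -> list[str]:
    """Stand-in planner: split the goal on ' then '.
    Assumption: a real agent would ask a language model to do this."""
    return [step.strip() for step in goal.split(" then ") if step.strip()]

def choose_tool(subtask: str) -> Callable[[str], str]:
    """Pick the first tool whose key appears in the subtask; default to write_tool."""
    lowered = subtask.lower()
    for name, tool in TOOLS.items():
        if name in lowered:
            return tool
    return write_tool

def run_agent(goal: str) -> list[str]:
    """Plan the goal, then run each subtask in order with the chosen tool."""
    results = []
    for subtask in plan(goal):
        tool = choose_tool(subtask)
        results.append(tool(subtask))
    return results

if __name__ == "__main__":
    for line in run_agent("search cheap flights to Lisbon then write a short summary"):
        print(line)
```

The services above hide all of this behind a chat box, which is precisely what makes them easy to try: you only write the goal.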