OpenClaw is the total AI agent that challenged Big Tech. Big Tech’s response: buy it, of course

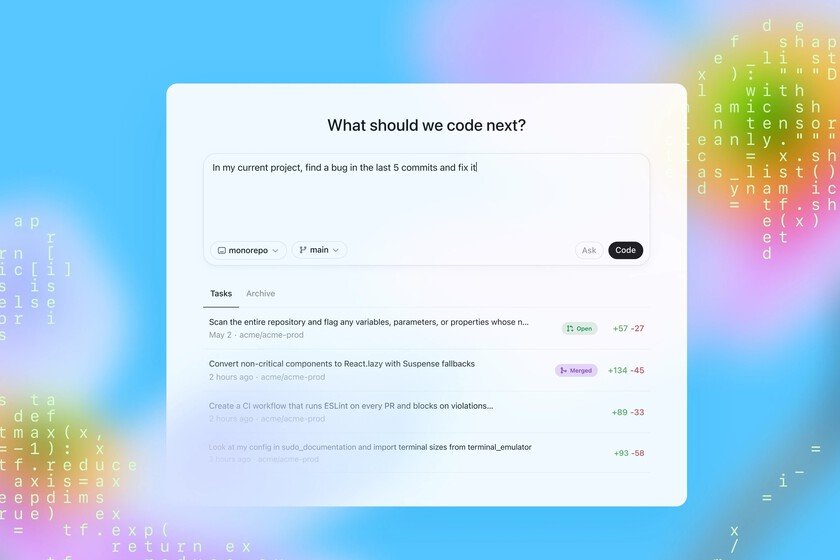

Peter Steinberger was unknown to the vast majority of the planet until less than a month ago. His project, which he initially called Clawdbot (later Moltbot and finally OpenClaw), became the new sensation of the internet and the AI world. Its growth has been so spectacular that the big players in the field set their sights on it and, inevitably, began fighting to sign its creator and acquire his project. That bidding war already has a winner: OpenAI.

What is OpenClaw. OpenClaw is what we could define as “the total AI agent”: a system that uses one or more AI models, such as those from OpenAI, Anthropic or Google, to do things for you. Here are some differences from using those models in a “traditional” way:

- You can chat with your AI agent using messaging apps like Telegram or WhatsApp, as if it were just another contact.
- OpenClaw takes full control of the machine you install it on, whether that is an old PC, a Raspberry Pi or a VPS, for example. It has permission to do whatever it wants inside that machine, which also carries risks.
- The capability of current models, such as Opus 4.5, makes the agent genuinely autonomous and proactive: it may, for example, suggest things to you or make decisions based on the conversations you have with him? her? it?

OpenAI buys OpenClaw. Last week Steinberger had already mentioned in an interview with Lex Fridman that OpenAI and Meta had made offers to sign him and acquire his project. Those intentions crystallized on Saturday, when the creator of OpenClaw announced that he had signed with OpenAI and that the OpenClaw project “will become managed by a foundation and will remain open and independent.”

It was a more than reasonable exit for Steinberger, who has probably received a significant sum of money and prestige, but it leads us to the eternal question: can you compete with the big companies? Short answer: probably not.
Large companies have always been hampered by their own size when it comes to reacting quickly to new trends, and even the largest AI companies suffer from this same problem. OpenClaw was doing something that none of them had dared to do (partly because this type of agent has too much “power”), but with these projects and with the startups that are beginning to emerge, the same thing always happens: either the big companies copy the idea and end up burying the original, or they buy the startup that threatened to compete with them. For many startups, in fact, the “exit” or future strategy of the project consists of being bought by a large company.

A creator who didn’t want to be CEO. Steinberger explained in his post how his project opened up “an endless string of possibilities” for him, and confessed that “yes, I could really see that OpenClaw could have become a giant company. But no, I’m not excited about that. I’m a creator at heart.” Steinberger has already created a company and dedicated 13 years of his life to it, and “what I want is to change the world, not create a big company, and partnering with OpenAI is the fastest way to bring this to the entire world.”

One person’s first unicorn? The arrival of ChatGPT soon got people talking about the “Solo Unicorn” phenomenon: a startup created by a single person which, thanks to AI, would be valued at more than 1 billion dollars. We do not know what price OpenAI has paid for this signing, but it likely falls short of that figure. What does seem evident is that OpenClaw was the type of project and idea that could well have become that “Solo Unicorn”.

The era of custom AI agents. Sam Altman, CEO of OpenAI, confirmed the news on X.
There he said that the creator of OpenClaw had joined OpenAI “to lead the next generation of personal agents”, and highlighted that “we expect this (personalized AI agents) to quickly become an integral part of our product offerings.” In addition, he gave assurances that OpenClaw will remain open source, something that was probably one of the essential conditions Steinberger set for joining the ranks of OpenAI.

And now what. That the project remains open source and independent is great news, and in theory it will allow OpenClaw to continue functioning as before, while OpenAI’s resources could help it grow exceptionally. It remains to be seen whether the deal will end up having a negative impact in any way, but what also seems clear is that these types of “total AI agents” could soon be an integral part of the offering of other AI companies as well.

Welcome to the era of total AI agents. We had already seen part of what OpenClaw does in projects like Computer Use from Anthropic, Project Jarvis/Mariner from DeepMind or Operator from OpenAI itself. Those allowed AI to do things for us in the browser, but OpenClaw does things for us with all the applications on the machine on which we install it (the email client, the command console, etc.). We are facing an interesting stage for this type of system.