Kasparov succumbed to Deep Blue, and that showed that machines could finally surpass humans. Then came defeats in other fields (Go, StarCraft), but always with algorithms as the protagonists. Now it is the robots that want to surpass us and, after some disappointments and some amazing previews, they are out to conquer a sport that poses an exceptional challenge: tennis.

Be careful, Alcaraz, the robots are coming. Researchers from Tsinghua University and Peking University, among others, have collaborated to develop a robot capable of playing tennis. The project has been named LATENT (Learn Athletic humanoid TEnnis skills from imperfect human Motion daTa), and it is surprising because the principle is very similar to that of developments like AlphaZero: the machine (the robot) practically learns to play by itself. We have already seen similar advances with sports like ping pong or with kung fu demonstrations, but this milestone has been achieved in a different and striking way.

Imperfect movements. Until now, getting a robot to react at the speed of a tennis ball was an almost insurmountable challenge due to the lack of perfect movement data. What makes these researchers' advance especially striking is that the machines now learn to play from “imperfect” information captured from humans.

Mini tennis. Capturing accurate data from a real tennis match is very expensive and complex due to the size of the court and the subtlety of the players’ wrist movements. To solve this, the LATENT team chose to collect “primitive skills” data. That is, the robot was shown basic movements such as the forehand, the backhand, or lateral movements. In addition, the capture area was 17 times smaller than a professional court, precisely to reduce the complexity of the initial system. The objective was for the robot to develop its own technique from there.

Learn from your mistakes. The striking thing about this development is that, with so little data, the robot was capable of making corrections on the fly when moving or hitting the ball. It was able to keep its body stable while following the style of human movements, and it was also able to finely adjust the angle of the racket to strike the ball appropriately.

No strange things. The researchers also wanted to prevent the robot from starting to “make up” strange movements during its reinforcement training. Thus, they created a technique that forced the AI to explore only human-like movements based on the initial data distribution.
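As a rough illustration of the idea described above, here is a minimal Python sketch of exploration that stays close to a set of recorded human poses. It is not the LATENT team's technique, which the article does not detail: the per-joint "mean plus or minus k standard deviations" band, the function names `fit_bounds` and `explore`, and the toy data are all assumptions made for this example.

```python
import random
import statistics

def fit_bounds(human_poses, k=2.0):
    """Per-joint (low, high) band: mean +/- k standard deviations of the human data."""
    bounds = []
    for joint_values in zip(*human_poses):
        mean = statistics.fmean(joint_values)
        std = statistics.pstdev(joint_values)
        bounds.append((mean - k * std, mean + k * std))
    return bounds

def explore(action, bounds, noise_scale=0.3, rng=random):
    """Add Gaussian exploration noise, then clip each joint back into its band."""
    noisy = [a + rng.gauss(0.0, noise_scale) for a in action]
    return [min(max(x, lo), hi) for x, (lo, hi) in zip(noisy, bounds)]

# Hypothetical toy data: three recorded poses, two joint angles each (radians).
human_poses = [[0.10, -0.50], [0.20, -0.40], [0.30, -0.60]]
bounds = fit_bounds(human_poses)
sample = explore([0.25, -0.45], bounds, rng=random.Random(0))
```

However large the exploration noise is, the clipped sample never leaves the range seen in the human data, which is the intuition behind keeping a learning robot from inventing strange movements.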

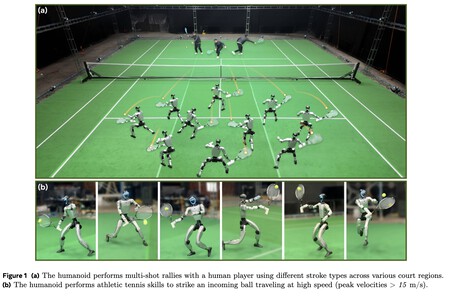

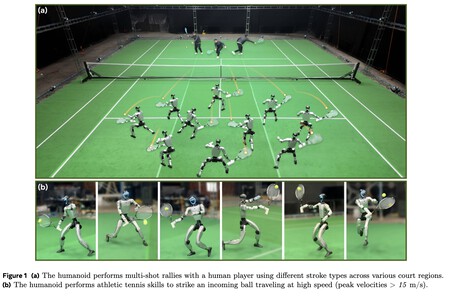

Unitree G1 already plays tennis. To translate their work into reality, the researchers installed the system on a Unitree G1 robot. This humanoid robot has 29 degrees of freedom, and a racket was attached to it using a 3D-printed part. The physical tests were surprising: the G1 was able to return balls thrown at more than 15 m/s (54 km/h), and it was also able to maintain rallies with human players on a real court. The robot was capable of covering a large part of the court and dynamically adapting its posture according to the trajectory of the ball.

The beginning of something bigger. These tennis robots are very far from being able to compete with human players, much less with professionals, but they demonstrate that reinforcement learning techniques that have been applied in games such as chess or Go may also be valid for physical environments with robots. In fact, this advance raises the possibility that robots can learn any physical discipline (whether a sport or not) from a limited set of basic movements.

